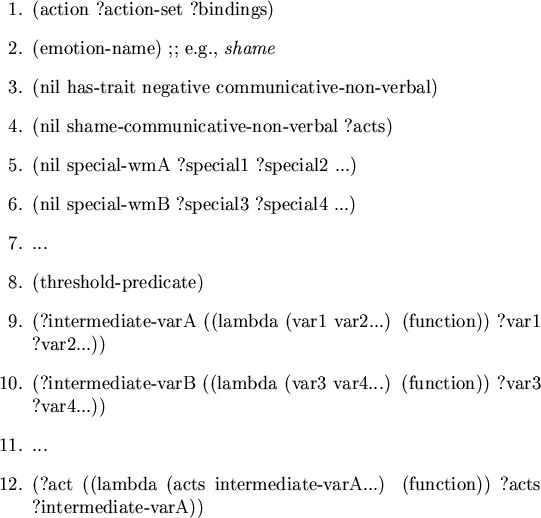

- Roughly, a motivator is derivative if it is explicitly derived

from another motivator by means-ends analysis and this origin is recorded

and plays a role in subsequent processing. A desire to drink when thirsty

would be nonderivative, whereas a desire for money to buy the drink would

be derivative.

The Affective Reasoner is concerned only with manipulations of the

nonderivative motivators which lead directly to the emotion inference

mechanism.

Among the emotion-reasoning systems, the Affective Reasoner and

ACRES may be characterized as approaches that deal with the representational

issues of emotions proper, and of the processes that generate them. By

contrast OpEd, SIES and AMAL may be seen as more general approaches that

have an emphasis on reasoning within the object domain about

situations that give rise to emotions. BORIS and THUNDER sit somewhere in

the middle. In other words, the Affective Reasoner and ACRES focus on the

nonderivative motivators which lead to emotions, whereas the other systems

concentrate, to varying degrees, on the derivative

motivators as well. Note also that while the Affective Reasoner does little

reasoning within the object domain itself, it does have an associated system

for indexing stories in an object domain according to the underlying

initiators of emotions (see [10]).

In this sense it is similar to SIES.

Of these programs, only ACRES gives any treatment at all to the

biological systems involved in the production of emotions, and then only

cursorily. The remainder (the Affective Reasoner included) deal only with

the cognitive aspects of emotions.

The earliest well-known computer model of emotion reasoning was

Kenneth Colby's PARRY [5], a program that mimicked the behavior

of a schizophrenic paranoid personality. A concise account of PARRY is

provided by Ortony [17] who writes,

- Colby's program, PARRY, accepted English-like input and responded as

a patient in a psychiatric interview by attempting to construe inputs as

directly or indirectly indicative of malevolence. If malevolence was

detected, one or more of three affective responses (fear, anger, or

mistrust) was triggered, depending upon the nature of the construed

malevolence. Physical malevolence induced fear, psychological malevolence

anger, and the induction of either also induced mistrust. The linguistic

output was designed to offset the construed malevolence either through

counterattack or withdrawal. In all cases, the nature of the construals and

of the responses was determined by the constantly updated values of a number

of key variables, including those for fear, anger, and mistrust.
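
The update rule in the account quoted above can be sketched in a few lines. This is only an illustration of the quoted description, not PARRY's actual code: the class `AffectState`, the function `react`, the construal labels, the step size, the cap at 1.0, and the rule that picks withdrawal or counterattack are all assumptions introduced here.

```python
from dataclasses import dataclass

# Illustrative step size; the quoted account gives no numeric values.
STEP = 0.2


@dataclass
class AffectState:
    """Hypothetical container for the three affect variables named in the quote."""
    fear: float = 0.0
    anger: float = 0.0
    mistrust: float = 0.0


def _bump(value: float) -> float:
    """Raise a variable by STEP, capped at 1.0 (the cap is an assumption)."""
    return min(1.0, round(value + STEP, 3))


def react(state: AffectState, construal: str) -> str:
    """Update the state for one construed input and choose a response style.

    construal is "physical", "psychological", or "none" (assumed labels).
    """
    if construal == "physical":
        state.fear = _bump(state.fear)        # physical malevolence induces fear
    elif construal == "psychological":
        state.anger = _bump(state.anger)      # psychological malevolence induces anger
    else:
        return "neutral reply"                # no malevolence construed
    state.mistrust = _bump(state.mistrust)    # either one also induces mistrust
    # Assumed policy: the dominant variable selects withdrawal or counterattack.
    return "withdraw" if state.fear >= state.anger else "counterattack"
```

Because the state persists between calls, the same input can produce a different response later in the interview, which is the sense in which the variables are "constantly updated".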

The Affective Reasoner, like PARRY, also models agents who ``have''

emotions. In addition, both systems maintain internal dynamic states, which

affect the way in which the modeled agent(s) construe the perceived world

and manifest the emotions resulting from those construals. Starting with a

psychological model, PARRY attempted to model a true paranoid personality.

By contrast, the Affective Reasoner uses an ad hoc theory of

rudimentary personality representation designed to be broadly applicable

rather than focused on a specific psychological syndrome.

More recently, another system, BORIS [8], analyzed text

for affective responses to goal states and interpersonal relationships. In

this system, Dyer used ACE structures (Affect as a Consequence of Empathy)

to account for a number of inference mechanisms that included

affective content. In particular he developed the idea that understanding

affect can allow one to produce a causally coherent analysis of stories. In

BORIS, Dyer does not make a distinction between principles, goals and

preferences. This is important because the Affective Reasoner uses this

distinction to resolve some ambiguities. For example, a person may turn

himself in after committing a crime because it upholds a standard of

honesty, even though it clearly violates his goal of being free. Such

conflicts between goals and principles are a common theme of human emotional

life and give us important leverage for reasoning in the affective

domain.

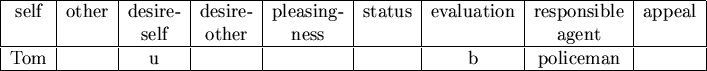

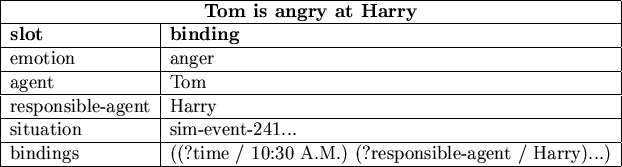

Dyer uses a six-slot frame structure to represent his ``affects''.

By contrast, the Affective Reasoner uses a hierarchy of loosely-structured

frames to represent interpretation schemas, and a simple nine-attribute

relation (called the Emotion Eliciting Condition Relation) to represent

emotion types. There are a number of reasons this latter approach is better

suited to the type of reasoning we do. In the first place, frame hierarchies

allow for the use of slot inheritance. This is useful when specifying the

interpretation schemas used for appraising emotion eliciting situations.

That is, properties may be shared by many interpretation schemas and yet can

be specified once in an ancestor, and then inherited by children frames. For

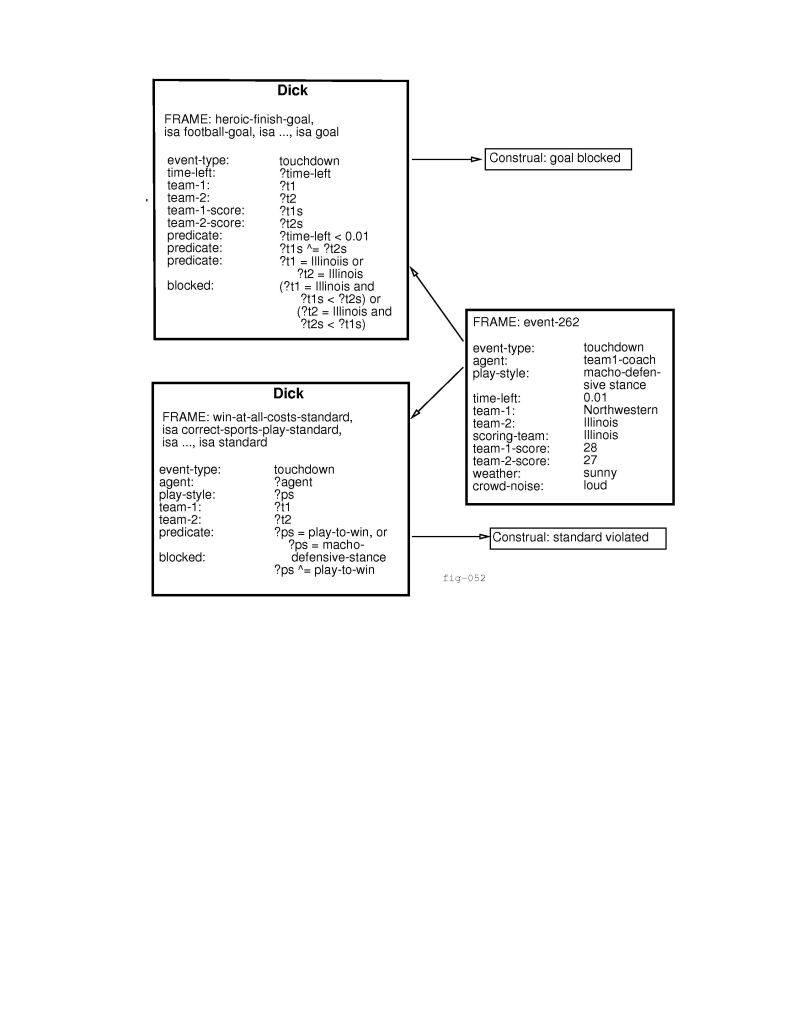

example, when a team scores a touchdown during a football game it may elicit

emotions in the fans. However, much of what defines this as an emotion

eliciting situation has to do with the nature of games in general, and at an

even higher level, with entertainment. Interpretation schemas may inherit

slots that specify the associated goals as entertainment

goals instead of health goals, and descriptive slots such as team-that-scored-the-goal, etc. The use of frames allows a great deal of

efficiency in making use of the system's stored knowledge about situations.
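
Slot inheritance of this kind can be sketched as follows. The sketch is in Python rather than the system's Common LISP, and the `Frame` class, the slot names, and the slot values are assumptions made for illustration; only the entertainment, game, and touchdown levels come from the example above.

```python
class Frame:
    """A minimal frame: named slots plus an optional parent to inherit from."""

    def __init__(self, name, parent=None, **slots):
        self.name = name
        self.parent = parent
        self.slots = dict(slots)

    def get(self, slot):
        """Look up a slot locally, then walk up the ancestor chain."""
        frame = self
        while frame is not None:
            if slot in frame.slots:
                return frame.slots[slot]
            frame = frame.parent
        raise KeyError(slot)


# Slot names and values below are illustrative, not taken from the system.
entertainment = Frame("entertainment", goal_type="entertainment-goal")
game = Frame("game", parent=entertainment, has_opposing_sides=True)
touchdown = Frame("touchdown", parent=game,
                  team_that_scored_the_goal="home-team")
```

Here `touchdown.get("goal_type")` finds the value two levels up, so the goal type is stated once in the ancestor rather than in every schema about games.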

The frames used in this flexible representation of an agent's

interpretation schemas should not be confused with the simple relations

representing the emotions. Both BORIS and the Affective Reasoner use such

relations to represent emotions, although interestingly BORIS represents

these relations as frames. Here there is no room for inheritance: each

attribute of the tuple is specified and has an associated value. To give

inheritance to such an emotion specifier is to imply that one emotion is a

child of some other emotion, and, as such, derives value from the parent.

(Such a position is viable, but it is not one taken either by the Affective

Reasoner or BORIS.)

One result that the BORIS work shares with the Affective Reasoner

and the work by Ortony, et al. [18] is in suggesting valid, but

generally unnamed, emotion types. These arise when certain configurations of

the attributes in the Emotion Eliciting Condition Relations (to use the

Affective Reasoner's term) seem to give rise to a recognizable, but unnamed

set of emotional states in the agent. Dyer refers to the case where  feels negative toward  as a result of a goal failure caused by  and

gives the example of a woman who felt foolish and castigated herself for

having had her purse stolen by a thief [8]. Ortony, et al.

identify the class of emotions that arise when one's fears have been

confirmed. They call these, appropriately, the fears-confirmed type

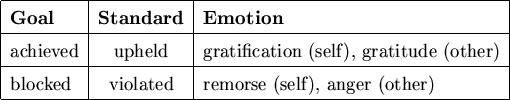

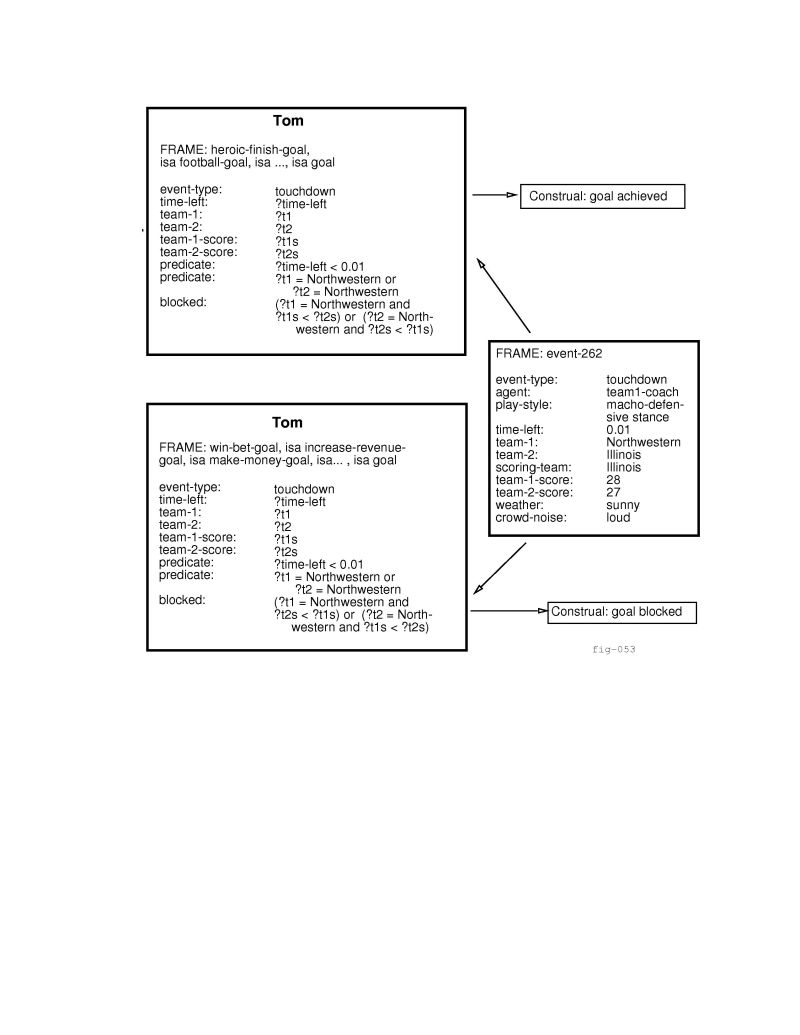

of emotions. In the current Affective Reasoner research, questions are

raised about one's goals being blocked by the upholding of standards (i.e.,

``doing the right thing'' which has a bittersweet, heroic quality), and

having one's goals furthered by the violation of standards.


Related work has been done by Dyer on OpEd [9], which

understands newspaper editorial text. In the OpEd architecture, frames are

used to represent conceptual dependency structures [26]

which form belief networks about the world. Inferencing is guided by

knowledge of affect which is incorporated into the knowledge base. For

example, in trying to understand the meaning of a piece of text, the word

``disappointed'' causes demons to be spawned that resolve pronoun references

and search for blocked goals. This processing all falls into the category of

plausible reasoning within the object domain which, as previously discussed,

is beyond the scope of the Affective Reasoner's focus.

The THUNDER (THematic UNDerstanding from Ethical Reasoning) program

of Reeves [21], is a story-understanding system that focuses on

evaluative judgment and ethical reasoning. Schemas called Belief

Conflict Patterns are used to represent the different points of view in a

conflict situation. This work is related to that of the Affective Reasoner

in that the kind of moral reasoning needed to understand the stories that

THUNDER takes as input involves the same sorts of knowledge required to

generate the attribution and compound emotions. One of Reeves's

contributions is in the detailed working out of a set of moral patterns at

the level of stories. Reeves develops the idea that evaluators of

stories make many moral judgments about the characters in those stories

using these patterns, and that this moral framework is often necessary for

understanding text. For example, Reeves analyzes a story in which hunters

tie dynamite to a rabbit ``for fun''. The rabbit runs under their car for

cover and when the dynamite blows up it destroys their car. Reeves argues

that to understand the irony in this story the evaluator must know that

killing a rabbit with dynamite for entertainment is reprehensible. This

allows the judgment that the chance destruction of the car is an example of

morally satisfying retribution.

Clearly moral reasoning is closely related to the rise of emotions.

In both THUNDER and the Affective Reasoner, the focus is on the point of

view of individual agents within, or observing, a situation. Reeves writes:

``...using different value systems will produce different ethical

evaluations. For example, the actions of a high school student who killed

himself after failing a test would be judged ethically wrong by a Catholic,

but not by a Samurai...'' ([21], page 23). The Affective

Reasoner has no text understanding mechanism, but it could simulate

the suicide scene with various observers responding with different emotions,

dependent upon their (moral) principles.

One area that has not been focused upon in great depth is the action

generation component of emotion reasoning. Most action schemes in AI

research focus on the logically defined needs of the agent (i.e., actions

as a function of planning for goals). Since emotions themselves are so

poorly understood and so seldom incorporated into experimental AI

systems, it is understandable that little work is being done that uses them

as motivations for action. One system that does function in this way,

however, is the ACRES program (Artificial Concern REalisation System)

developed by Frijda and Swagerman [12] in the Netherlands.

The main premise of their research is that emotions provide

a way of operating successfully in an uncertain environment (see also

[28]). They approach the design of their system from a

functional standpoint: if an agent is to be able to respond functionally

to its environment, what properties must the subsystem implementing

that functionality have? To this end, in contrast to the

Affective Reasoner's descriptive approach, the primary emphasis is

placed on behavior potentials and the search for reasons to

initiate them. To quote:

- The major phenomena are: the existence of the feelings of pleasure

and pain, the importance of cognitive or appraisal variables, the

presence of innate, pre-programmed behaviors as well as of complex

constructed plans for achieving emotion goals, and the occurrence of

behavioral interruption, disturbance and impulse-like priority of emotional

goals [12], (page 235).

Their architecture is designed to meet these demands:

- The system properties underlying these phenomena are facilities

for relevance detection of events with regard to the multiple concerns,

availability of relevance signals that can be recognized by the action

system, and facilities for control precedence, or flexible goal priority

ordering and shift (page 235).

The Affective Reasoner also addresses some of these issues, although

the emphasis is different. The Frijda-Swagerman action architecture arises

from issues relative to processing needs, whereas ours arises from a

description of actions. Similarities can be found on a number of fronts.

Both systems incorporate the idea of the cyclic nature of emotion machinery:

a situation arises, it is evaluated, it is either ignored or a response is

initiated which is fed back to the modeled world. As with the

Affective Reasoner, ACRES performs no reasoning about derivative motives.

Instead, emotion triggers are directly wired into the architecture.

The Affective Reasoner and ACRES also both stress the importance of

concern realization as a way of filtering out situations that are

not of interest, and of interpreting those that are. Entities have multiple

concerns and limited resources. To solve this problem both programs focus on

matching as an efficient means of assessing emotion eliciting

situations. To quote Frijda and Swagerman, ``Concerns can be thought of

as embodied in internal representations against which actual conditions are

matched,'' [12] (page 237), which is exactly the conceptual

approach of the Affective Reasoner.
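
A minimal sketch of matching as a relevance filter, together with the evaluate-then-ignore-or-respond cycle described above, might look like this. It reproduces neither ACRES nor the Affective Reasoner: the `Concern` class, the feature names, and the response strings are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Concern:
    """An internal representation that actual conditions are matched against."""
    name: str
    required: frozenset  # features a situation must have to be relevant


def relevant_concerns(concerns, situation_features):
    """Return the concerns whose required features all appear in the situation."""
    features = set(situation_features)
    return [c for c in concerns if c.required <= features]


def cycle(concerns, situation_features):
    """One pass of the loop: evaluate a situation, then ignore it or respond."""
    matched = relevant_concerns(concerns, situation_features)
    if not matched:
        return None  # situation is of no interest and is filtered out
    return [f"respond-to:{c.name}" for c in matched]


# Hypothetical concerns for a taxi driver agent.
concerns = [
    Concern("earn-fares", frozenset({"passenger", "payment"})),
    Concern("avoid-tickets", frozenset({"police", "speeding"})),
]
```

With these definitions, `cycle(concerns, {"rain"})` returns `None`, so the situation is dropped before any further interpretation is attempted.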

The two systems differ, however, in the way in which they use the

realized concerns. Of primary importance to ACRES (but only of incidental

importance to agents in the Affective Reasoner) is filtering out situations

irrelevant to resource conservation (as most situations are). In addition,

unlike the Affective Reasoner, ACRES stresses the correct interpretation of

situations with respect to urgency (as distinct from importance; see

[28]). To approximate this behavior ACRES uses a system of

interrupts.

There are a number of interesting issues that ACRES does not

address. One of these is the maintaining of expectations with respect to

future goals. The aging of expectations and the elicitation of associated

prospect-based emotions is important for systems representing emotional

behavior, as is the representation of the emotional status of other agents.

ACRES does not maintain internal models of other agents, nor does it

maintain a working memory for the storage of currently active relationships.

Without these features it is not possible to reason about the emotional

lives, and therefore motivations, of other agents with whom it must

interact. A simple test of such a system is to see how well it fares on

modeling a situation such as the following: Tom pities his friend Harry who is sad because his (Harry's) mother has had her garage

burn down. Humans have no trouble with this because not only do we have an

internal representation of our own concerns, but we are also apparently able

to use some similar mechanism for representing the concerns of others, and

thus ``imagine'' how they might feel with respect to some situation. In this

case Tom must not only model Harry, but he must also model

the concerns that Harry is modeling for his mother. (See

section 2.8.3.)

The Affective Reasoner, in contrast to ACRES, incorporates

representations of the concerns of others in its architecture (see section

2.8), and represents relationships between agents. These

representations are incorporated into the emotion-generation process when

initiating fortunes-of-others emotions (see section 2.3) and into

the process that helps agents identify new situations as instances of a

certain emotion type.

The System for Interpretation of Emotional Situations (SIES) of

Rollenhagen [24], and the AMAL system of O'Rorke

[16] share a number of features. They both have taken on the burden of

having to do derivative reasoning about the situations that give rise to

emotions within the object domain. They both use retrospective reports about

emotion-inducing situations as the basis for the structural content analysis

that drives the reasoning systems. Lastly, they both are descriptive in

nature. SIES attempts to better define emotion terms by exploring their

similarities and differences through abstract representations. AMAL attempts

to explain situations as emotion episodes through the use of abductive

reasoning.

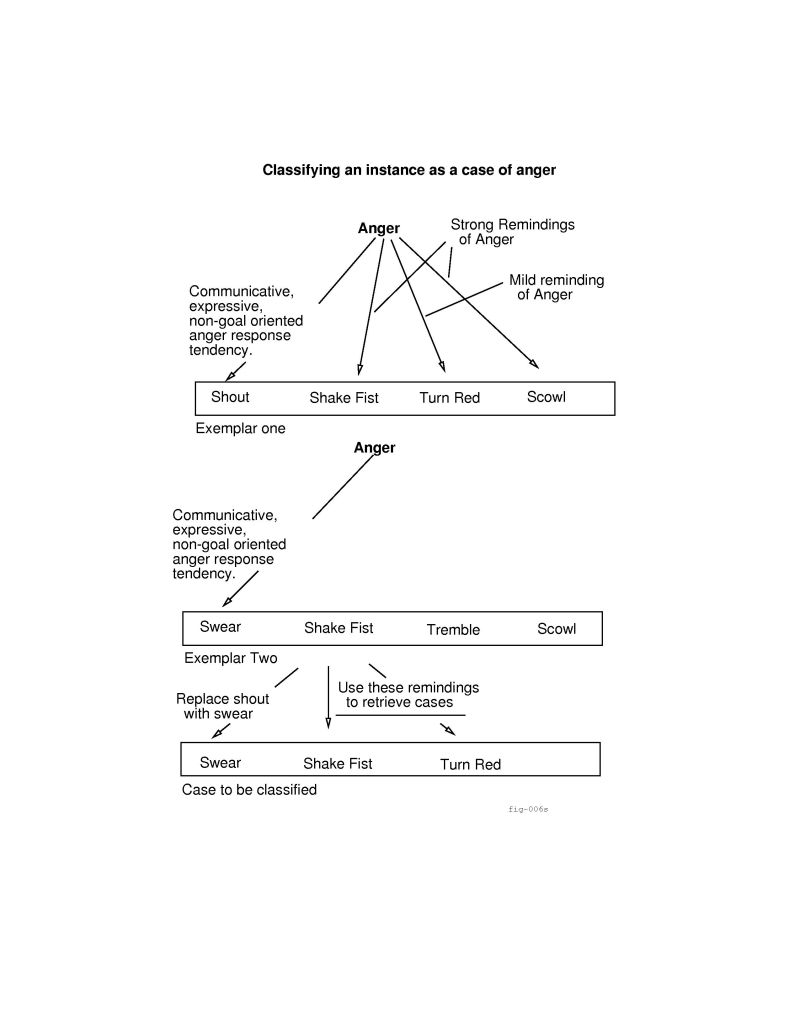

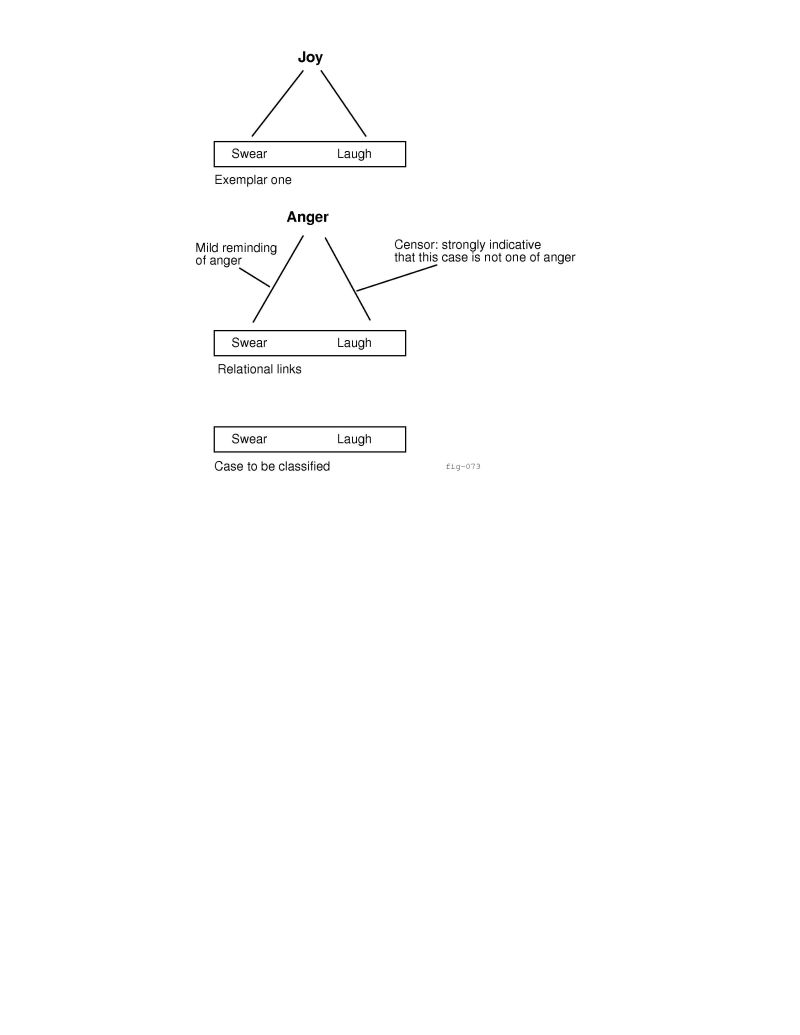

By contrast, the character of the Affective Reasoner, as a simulation, is quite different from these two programs. In the Affective

Reasoner the process of representing emotions contributes not only to

developing a language for describing emotion episodes but also to

the building of intelligent agents that ``have'' emotions. In addition,

while the Affective Reasoner uses a logic-based representation for reasoning

about the eliciting conditions for emotions, similar to both SIES and AMAL,

neither of these latter systems has anything like the case-based approach

used in the Affective Reasoner to reason backwards from the manifestations

of emotions to the emotions themselves.

The theoretical underpinnings of AMAL and SIES are quite different,

although in practice this difference is minimized. AMAL (based in part on

the work of Ortony, et al. [18]) is an extension of the cognitive appraisal approach where the antecedents of emotions are

described independently of the particular situations from which they derive.

In this approach it is situation types that give rise to emotions

(e.g., an undesirable event giving rise to distress), rather than relatively

specific situations (such as the death of a loved one). However, since AMAL

is designed to be able to represent such real-world emotion-eliciting

situations as those portrayed in student diaries [31],

it must also be able to do extensive reasoning within the object domain. To

represent these emotion anecdotes a situation calculus is used.

SIES, on the other hand, is an attempt to combine the cognitive approach

with a situational approach in which the antecedents of emotions are

seen as arising in certain real-world situations (e.g., loud noises leading

to fear, or separation from a loved one through death leading to sadness).

In his description of SIES, Rollenhagen gives many rules for emotion

reasoning, but most of these are employed in describing an instance of a

given emotion eliciting condition as embodied in a narrative description -

that is, in giving structure to the derivative motivations of the agents.

SIES should be understood as a system for analyzing emotion

episodes. In this regard, much of the contribution of the system is in

structuring the representation of the data. The program does not map

situation features into specific eliciting conditions. Instead, inferences

tying abstract situations into the personal concerns of the subjects are

made at the time the researcher encodes each emotion eliciting situation.

The representation of emotion eliciting situations in the Affective

Reasoner is from a different perspective, and is structured around the idea

that agents may be seen as carrying internal schemas that match

situations that arise in their world. Here the focus is on frames that

contain enough information to discriminate one situation from another

through matching. Varied interpretations of these situations,

produced by unifying an interpretation frame with a situation frame during

the course of the simulation, are seen as whole units. These interpretation

units are configured in various ways such that they give rise to the various

emotions.
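
The matching step can be illustrated with a small sketch. It implements one-way pattern matching over flat frames, which is a simplification of full unification; the `?`-prefixed variable convention, the slot names, and the two hypothetical fans are assumptions made for the example.

```python
def is_var(term):
    """Variables are strings beginning with '?', a convention assumed here."""
    return isinstance(term, str) and term.startswith("?")


def unify(pattern, situation):
    """Match an interpretation frame against a situation frame.

    Both are flat dicts. Every slot in the pattern must be present in the
    situation; variables bind to the situation's value, and a variable used
    twice must bind consistently. Returns the bindings, or None on failure.
    """
    bindings = {}
    for slot, want in pattern.items():
        if slot not in situation:
            return None
        have = situation[slot]
        if is_var(want):
            if bindings.setdefault(want, have) != have:
                return None
        elif want != have:
            return None
    return bindings


situation = {"event": "touchdown", "scorer": "home-team", "opponent": "visitors"}

# Two hypothetical fans interpret the same situation differently.
home_fan = {"event": "touchdown", "scorer": "home-team", "opponent": "?loser"}
visiting_fan = {"event": "touchdown", "scorer": "?winner", "opponent": "visitors"}
unrelated = {"event": "traffic-accident", "driver": "?who"}
```

Both fan frames match the one situation but yield different bindings, giving two distinct interpretation units, while the unrelated frame fails to match and produces none.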

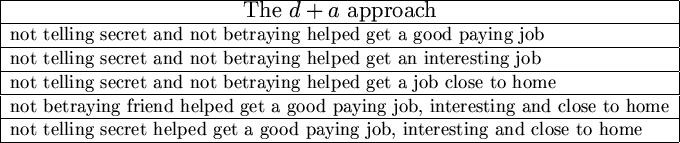

Unlike SIES and the Affective Reasoner, AMAL [16] does do

extensive, automated, plausible reasoning about derivative motivators. To

do this it uses an abduction engine. AMAL uses a logic-based situation

calculus framework to describe actual emotion

episodes [31]. Using abductive reasoning, AMAL is able to

construct plausible explanations for emotion instances. For example, AMAL

analyzes plausible relationships in the following story:

One other important difference among the three systems should be

noted. AMAL and SIES typify the approach that takes the logical structure

of the preconditions for emotions to be simply an extension of the structure

of the world (e.g., shouting causes Tom to startle, which causes him to drop

a hammer on his foot, which causes him to feel pain, which causes his friend

to feel sorry for him). Our approach is fundamentally different in that

there is a definite demarcation between the internal strong-theory rules

used to generate emotions for agents, and the object-domain rules used to

map into them. In this approach, since we see emotion eliciting situations

in the object domain as instances that may or may not match internal schemas

representing the concerns of the agents, processing and data-entry tasks are

very different in nature. While each approach can, of course, be reduced to

a logic representation, the two languages for content theory representation

are entirely different, and thus, in practice, so are the systems. In AMAL

and SIES everything is represented as rules; in the Affective Reasoner the

concerns of the users are represented as collections of features.

Toda's work on Solitary Fungus-Eaters [30] attempts

to describe emotions as a situated functional component of society. In this

case, Toda's society is that of simple (fantasy) uranium-mining robots that

operate autonomously on a distant planet and eat native fungus to survive.

The goal of these robots is to collect as much uranium as possible. If they

consistently choose only to travel to locations containing uranium, however,

they may not encounter enough fungus to keep themselves going and in such a

case would ``die''. Society arises out of a need for a ``coalitional

bond'' to help protect the robots against stronger predators, and so forth.

Toda's structural analysis of the human emotion system is based on

his stated belief that emotion was a useful mechanism for a very different,

primitive, society than our current one, and that just as our bodies have

problems dealing with modern-day environmental stresses such as pollution,

so does our emotional system have difficulties with such an (evolutionarily)

unfamiliar environment. To illustrate his ideas about why an emotion system

arose in the first place, giving insight into its nature, Toda details a

system of urges necessary to solve coordination problems in his

simple robot society.

For example, in response to the threat of a larger predator, a

Fungus-eater may have a Fear Urge which causes him to send out a

distress signal. This in turn triggers a Rescue Urge in other robots

who then come to jointly attack the predator. Since a reward is necessary

for this scenario to fit into the ``individual-gain'' paradigm of Toda's

robot society, a Gratitude Urge is developed. Rewards, given out of

gratitude by the rescued robot, may have to be postponed, however, if the

rescued robot is short on resources. Since this delay may cause problems, a

more immediate reward in the form of a Love Urge is developed, and

so forth.

The major difference between this work and that done on the

Affective Reasoner is of course that the latter is actually implemented as a

running program. While Toda has given some level of detail for many aspects

of his system (including vision and planning, not discussed here), there are

nonetheless many gray areas in his description. Vision and planning aside,

such social actions as defecting from one's coalition partner and joining a

more profitable coalition are treated as primitives in the discussion. To

implement even a small number of these primitives of the robot society would

be a major task. In addition Toda's urges, intended to be thought of

tentatively as simple emotions, are presented anecdotally and not as part of

a cohesive, complete theory.

Toda's theoretical perspective shares with both the Affective

Reasoner and ACRES the idea of emotions as mediators for controlled,

immediate responses to situations (see also [27]). To this

end, emotions take a functional role in the automated organism.

From this short survey it should be apparent that comparatively

little work has been done in this area, and that what has

been done is often so different from what has come before that comparisons

are strained. PARRY is a system that attempts to model a diseased

and limited mind. BORIS, OpEd and THUNDER use a content theory of emotions

to aid in the understanding of text. ACRES attempts to model the dynamic

utility of emotions. SIES provides a structure for mapping from the features

of situations into the emotions. AMAL uses abductive reasoning, well suited

to the understanding of emotion episodes, to explain emotion situations.

Clearly these are diverse approaches only

loosely tied together by the generic categorization of the work as ``emotion

research''. One theme that all of these treatments share, however,

is that working out theories at the level of detail required for a running

computer program forces the theorist to refine and completely specify all of

the hidden ``gray areas'' of the theory. This is particularly important for

this complex and little-understood subject.

1.4 TaxiWorld

The Affective Reasoner has three primary components: (1) the

affective reasoning component, which is independent of any domain, (2) a

world simulation based on an object-domain theory, and (3) a graphics

interface based on the simulated world. Once an object-domain theory has

been incorporated into the Affective Reasoner, and a graphics interface

customized for that domain, it becomes a domain-specific system. In its

current form the Affective Reasoner primarily manipulates agents that

are intended to be interpreted as operating in a schematic representation

of the Chicago area (figure 1.1). We call this TaxiWorld.

In fact, other partially analogous domains can be simulated if the user

simply reinterprets the meaning of the icons displayed. This is how the

football game example discussed in chapter 3 was run.

TaxiWorld has approximately forty-five different sets of simulation

events which produce emotion eliciting situations. Included are events that

represent traffic accidents, rush hour delays, getting speeding tickets,

picking up passengers, getting paid, having to wait a long time for a taxi,

and so forth. In addition, there are approximately forty different

rudimentary ``personalities'' which may be given to each agent that

participates in one of these situations. For example, one taxi driver may

get angry when he is given a small tip whereas another may just figure it is

part of the job. Or, both may get angry but one will be rude to his

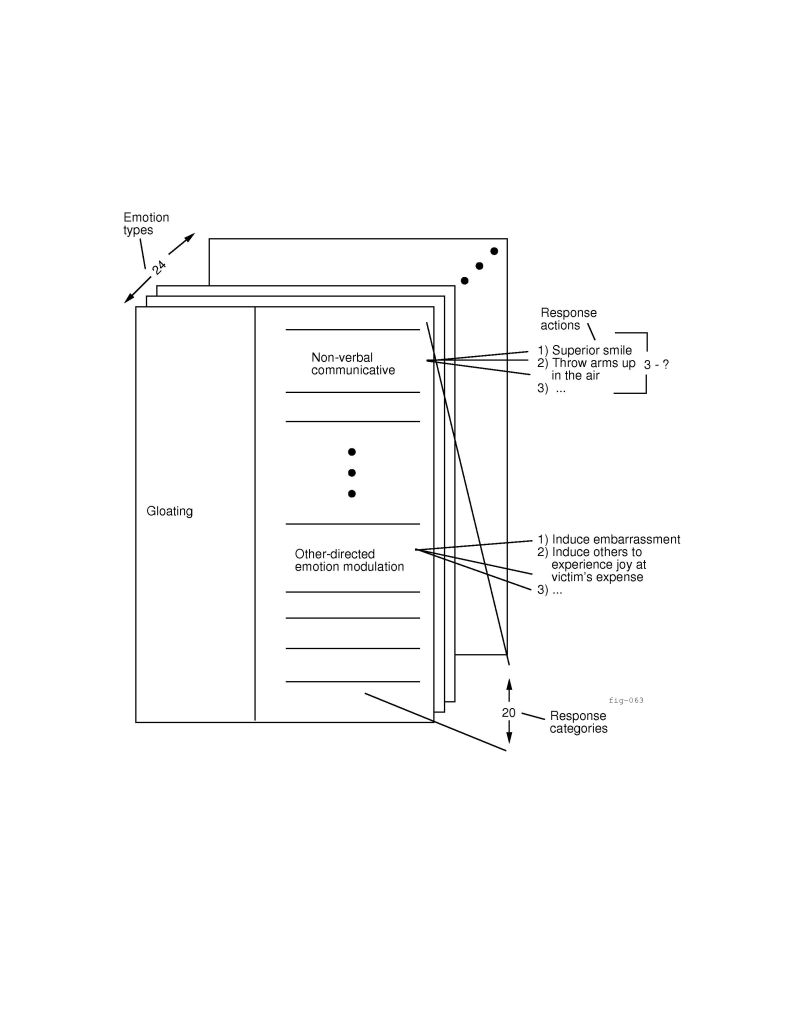

passenger, whereas another may just smile and pretend that he does not care.

There are roughly 150 different candidate interpretations of the forty-five

situations. Different interpretations, or sets of interpretations,

can give rise to one or more of twenty-four emotion types, each of which

has about sixty possible action responses associated with it. Used in

different combinations these components can yield tens of thousands of

different emotion episodes.

In any research of this kind the question naturally arises as to

what has actually been implemented and what has been run. In this

dissertation only a few emotion episodes will be discussed in detail so as

to allow us to focus on emotion representation issues. We provide at most

one emotion episode example for each point. However, it should be understood

that many different examples may actually have been run in the process of

addressing any one particular problem. In addition, unless otherwise stated,

illustrative examples in this dissertation are based on code that has been

written and actual simulated episodes.

The researcher using TaxiWorld has two forms of input into the

system. First he or she statically configures the object domain (i.e., the

world of taxi drivers and their passengers) through LISP files. Second, he

or she dynamically manipulates the running simulation through menus and

windows. Configuring the object domain has six parts: (1) Should the

researcher wish to extend the number of situations represented then new

simulation events must be entered into a LISP file, and event handlers

written to process them. (2) Once the object domain (e.g., the world of taxi

drivers) is stable, the researcher may choose to add new interpretations of

the situations that arise in it by creating construal frames (see

chapter 2) with which agents interpret situations. (3)

New emotion manifestations may be created within the object domain. For

example, in the world of taxi drivers we may wish to represent ``cutting

someone off'' as an expression of anger. (4) New personalities may be

created by grouping (old and/or new) construal frames into sets, and by

grouping temperament traits (i.e., tendencies toward certain types

of actions as expressions of emotion) into new sets. (5) Simulation

sets may be constructed by specifying which of the represented situations

are to arise, which types of agents are going to be present, and which

personalities those agents will have. Lastly, (6) a content theory for

reasoning about observed actions from cases may be recorded in a data file

or through interaction with the running system. User input macros have been

provided for (2) - (6).
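
Part (4) of this configuration, in which a personality is assembled from a set of construals and a set of temperament traits, can be sketched as below. The sketch is in Python rather than the LISP files the system actually uses, and the `Personality` class, its dictionary representation, and all names are assumptions; the small-tip scenario is the one described earlier.

```python
from dataclasses import dataclass, field


@dataclass
class Personality:
    """A rudimentary personality: a set of construals plus temperament traits."""
    # Maps a situation name to the emotion it is construed as eliciting.
    construals: dict = field(default_factory=dict)
    # Maps an emotion to the action this agent tends to express it with.
    temperament: dict = field(default_factory=dict)

    def respond(self, situation):
        """Return (emotion, action), or (None, None) if nothing is elicited."""
        emotion = self.construals.get(situation)
        if emotion is None:
            return (None, None)
        return (emotion, self.temperament.get(emotion))


# Construals and traits can be regrouped freely to form new personalities.
angry_about_tips = {"small-tip": "anger"}
rude = {"anger": "be-rude-to-passenger"}
stoic = {"anger": "smile-and-pretend-not-to-care"}

easygoing_driver = Personality(construals={}, temperament=rude)
rude_driver = Personality(construals=angry_about_tips, temperament=rude)
polite_driver = Personality(construals=angry_about_tips, temperament=stoic)
```

The three drivers reuse the same two kinds of building block: the easygoing driver never construes the small tip as blameworthy, while the other two share the emotion and differ only in how they express it.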

Figure 1.1 shows a portion of TaxiWorld's map display.

Interaction with TaxiWorld is through this interface. Except for stop and go, each of the buttons on the control panel leads to a

pop-up menu for manipulating different aspects of the system. This control

mechanism allows the following functions, by button: (1) stop and

go: start the simulation running or pause it, (2) demos:

select a configuration of agents and situations for the simulation, (3) control: change the speed of the simulation, or enable and disable

different features in the simulation such as whether or not agents

negotiate and what types of traffic delays may arise, (4) set: set

rudimentary global moods for the agents, and set the mode for the heuristic

classification system (i.e., set the case-based reasoning system in learn mode, in report-only mode, or off), and (5) queries: look at a scrolling window containing summaries of the

situations that have arisen so far.

Figure 1.1: The TaxiWorld display.

Once the user has configured and started the simulation, the

animated icons move around the screen as agents who ``experience'' the

situations in the simulated world. The figure shows a simulation that has

been running for a short time. Four taxi drivers, Tom, Dick, Harry and Sam,

are shown. Tom and Sam are traveling between Chicago's O'Hare Airport and

the Loop, Dick is between the ``Junction'' and the Loop, and Harry is on his

way to the Loop from the Museum of Science and Industry (not pictured). One

passenger is waiting for a ride at Northwestern University, and five

passengers are queued up waiting for rides from the Chicago Botanical

Gardens. During rush hours the roads swell with traffic and the agents move

more slowly. This occurs also with accidents. Highway patrol agents may

also be displayed.

Selecting an agent with the mouse provides information about that

agent. One option will bring up a scrolling window containing the current

``physical'' state of the agent: where he is, whether he has someone in the

cab (if he is a taxi driver agent), how much gas he has left, where he is

going, etc. Another option brings up an emotion-history scrolling window.

Figure 1.2 shows one of these, in this case for Harry. Here we

see summaries of two emotion instances, distress and dislike. These have arisen from two different construals of the same

situation. Briefly, a new passenger has gotten into Harry's cab and was

cheerful. Harry, whose ``personality'' type might be roughly described as

``depressed grouch'', has a goal and a preference that apply in such a

case. First, he has no desire to be around happy people because they remind

him of how unhappy he himself is. This leads to distress. Second, he just does

not like happy people; he finds them distasteful, so he dislikes this

passenger.

Figure 1.2: The emotion history scrolling window.

For maximum flexibility, the researcher has the option of

communicating with the running system directly through LISP. Basic tools

have been provided for this, in particular to control the asynchronous

processes spawned in the simulation. The user can also interact with the

case-based reasoner through LISP.

TaxiWorld is written in Common LISP and runs on a SUN SPARC

station 1 with 40MB of RAM. It uses a simple SOLO graphics

interface. The TaxiWorld code is about fifteen thousand lines in length,

and is combined with an additional fifteen thousand lines of slightly

modified code based on Dvorak's Common LISP version of Protos

[7].

1.5 Two examples from TaxiWorld

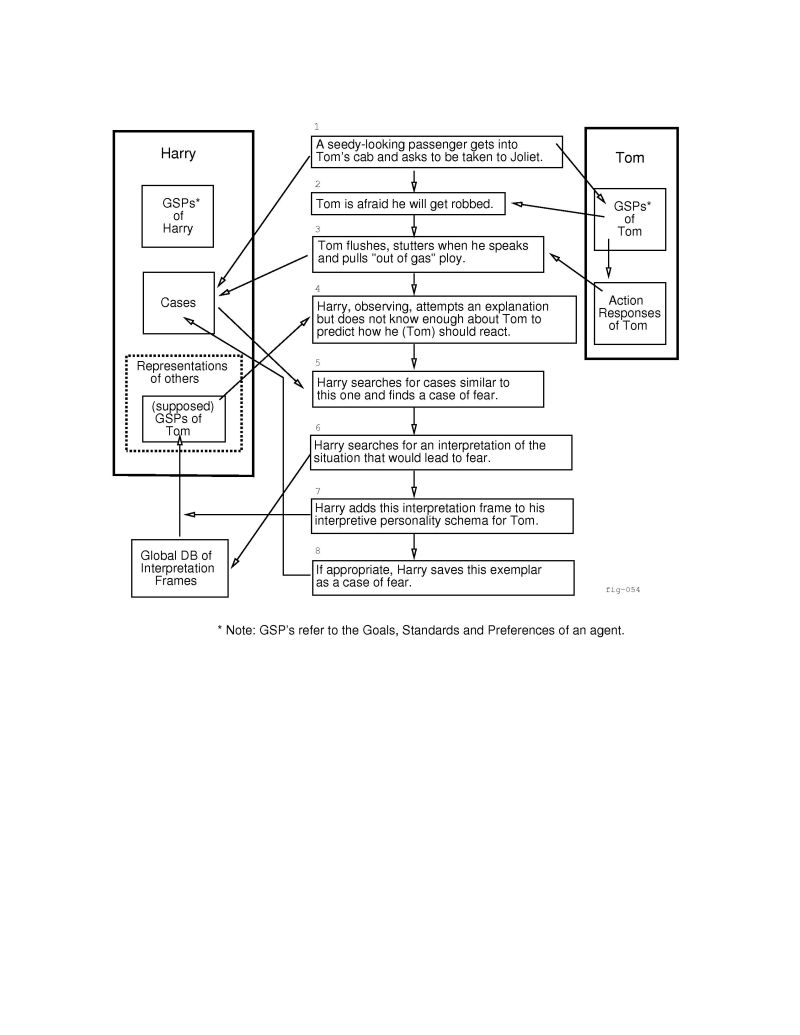

Figure 1.3: Harry learns about Tom's fear.

In this section we look at two examples of the kinds of episodes

that occur in TaxiWorld. Figure 1.3 represents a simple episode

where a taxi driver, Harry, observes another taxi driver, Tom, and learns

something about his fears. This episode makes use of three agents (the third

is Tom's passenger - not pictured), one situation (that arises in the

Chicago area) and a single affective state, in a TaxiWorld

simulation. In this episode we see the following: (1) Tom picks up a

passenger headed for Joliet at the Museum of Science and Industry. (2) The

passenger is seedy-looking, causing Tom to fear that he will be robbed. (3)

This fear is expressed as a flushed expression on Tom's face, a stutter in

his voice, and the statement that he does not have enough gas to drive to

Joliet. (4) Another taxi driver, Harry, watches this episode. He does not

know that Tom is inclined to be afraid of seedy-looking passengers whose

destinations are Joliet. He has no representation of how Tom might construe

such an event. However, (5) Harry has seen a case where another agent

had a flushed face and a stutter in his voice. The previous case was known

to be an example of fear. (6) Harry now reasons to come up with a

good explanation: if Tom is experiencing fear, why might this be so? He

``imagines'' some possible interpretations of this event that might cause

someone to be afraid and reasons that if Tom had the goal of retaining his

money and/or maintaining his personal safety, then given that seedy-looking

people have been known to rob cab drivers - thus taking away that money

and perhaps causing harm, Tom might experience fear. Since this explanation

fits, Harry drops his search. (7) He updates his internal representation of

Tom as someone for whom retaining money is important, and who is afraid of

unsavory passengers. (8) Harry also now has a new case which he may wish to

save. The ``I don't have enough gas'' ploy, which was not a feature of the

previous case of fear, may be explained to him as a problem-reduction strategy, or it may be left unexplained but associated,

depending on whether the system was being trained, or was running in

automatic learning mode.

Harry now believes he has learned something new about Tom. If this

knowledge is correct he will be better able to explain Tom's future actions

in response to such a situation without resorting to a case search (i.e., he

knows how Tom will interpret the situation). In addition he will be

able to predict how Tom might react to such an event, even in the

absence of any empirical evidence. On the other hand, if at a later time the

knowledge proves to be erroneous it will be discarded and a search for an

alternate explanation will be initiated.

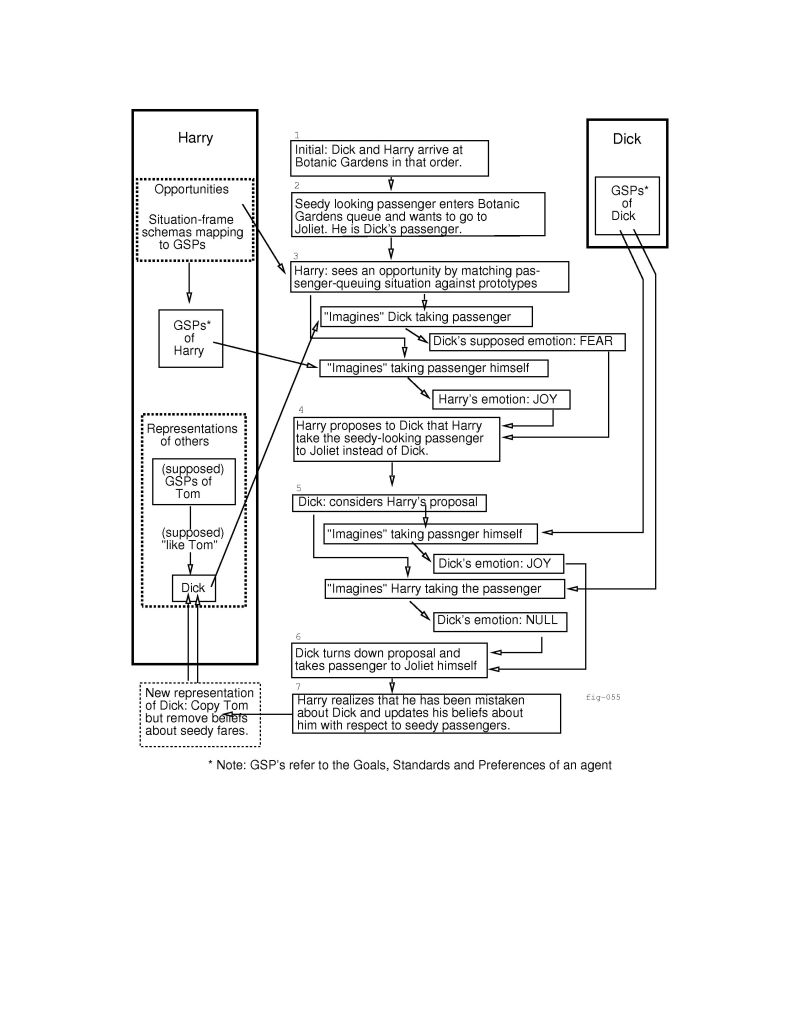

Figure 1.4: Negotiating about who takes the seedy-looking passenger to Joliet.

The episode pictured in Figure 1.4 expands on the previous

example. Here we see that some time later Harry has come to know Tom quite

well. He has met a new agent, Dick, who seems to respond to events the way Tom

does, although he (Harry) has never seen Dick pick up a seedy-looking

passenger headed for Joliet. Until he knows differently, Harry makes the

assumption that Dick is just like Tom (i.e., with respect to the way he

interprets situations). At one point, Harry (who has the goal of making

money and likes to go to Joliet because, as a long trip, this furthers his

goal), sees an opportunity. Here is the sequence of events: (1) Dick and

Harry arrive at the Botanic Gardens in that order, giving Dick the right to

the first passenger. (2) A seedy-looking passenger arrives at the cab stand

and wants to go to Joliet. (3) Harry matches the passenger-arrival

situation against an opportunity prototype.

He sees an opportunity to make a request of Dick, but ``thinks it through''

first to see if it is feasible. Using his representation of how Tom sees

the world as a default for Dick, Harry believes that Dick, like Tom, has

fear about going to Joliet with the seedy-looking passenger. On the other hand

he believes that he will, himself, be happy about going on such a trip. (4)

Harry proposes to Dick that Harry, instead, take the fare to Joliet. (5)

For his part, Dick, considering this proposal, ``imagines'' that Harry gets

to take the passenger. He has no emotional response to this. Next he

``imagines'' taking the passenger himself. As it happens, Harry's schema

for Dick is incorrect: while Dick is indeed very much like Tom in many ways,

he is unlike him in that he is not afraid of seedy passengers who want to go

to Joliet. Consequently Dick does not see a threat to his goals, but rather

only that if he does make the trip to Joliet he will make some

money. In other words, he reasons that if he makes the trip he will be

happy, and that if he does not make the trip he will simply experience a

lack of emotion. (6) He therefore does not agree to Harry's proposal and

instead takes the passenger himself. (7) Harry believes he has learned

something new about Dick. Apparently Dick does not construe seedy

passengers going to Joliet as threatening. Harry splits off his internal

representation for the goals, standards and preferences of Dick from the

representation of those for Tom and removes the offending interpretation

frame from the former.

In this example we see that Tom and Dick both have distinct

emotional lives, and that Harry is able to reason about them. Tom has a goal of making (keeping) money and is able to experience fear as a

result of believing that this goal may be threatened. Similarly, Dick also

has a goal of making money and is capable of feeling hope over

the prospect of taking a passenger on a long trip.

On the other hand, Harry, who is observing Tom and Dick, draws on

his experience, and his internal schemas for the other agents, to explain

their actions. In the first case, he has no clue as to how Tom might

interpret the situation. He looks through some cases to see if he might make

a guess as to what stuttering, flushing and the out-of-gas ploy

might indicate. He has seen something similar when someone else was afraid,

and he can explain the fear as a fear of getting robbed. Since this

explanation of the situation is workable he assumes that this is part of how

Tom interprets the world, and he now adds this to his representation of

Tom. Until he has cause to do differently he will now always make this

assumption about Tom.

In the future, Harry will test this knowledge whenever a similar

situation arises by asking this question: given a similar emotion

eliciting situation (seedy passenger going to Joliet), does the observed

agent respond in a manner compatible with an emotion to which the assumed

interpretation leads? If the agent does, then Harry will be more

confident that his assumed interpretation is correct; if he does not, then

Harry will have to search for another explanation (i.e., one which leads to

a different emotion).

In addition, Harry's representation of Tom is one he can draw on as

a default personality type, for reasoning about a new agent, Dick. As

long as Dick's actions can be explained in terms of Tom's personality, the

default personality suffices and he uses it to ``see the world through

Dick's eyes''. When an opportunity arises he believes that it is worth

pursuing because he is able to ``imagine'' how Dick will perceive his offer.

However, when Dick turns him down, Harry reasons that he has made an

incorrect assumption about Dick and removes this supposition from his

representation of how Dick sees the world. But this means that Dick no

longer looks just like Tom, so Harry must split the two schemas apart. The

old one still represents Tom, and the new one now represents Dick.
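The default-personality reasoning just described can be read as a share-then-split scheme: a new agent borrows an existing schema until an observation contradicts it. The following is a minimal sketch of that idea in Python rather than the system's Common LISP. The class `ObserverModel`, its method names, and the frame names are hypothetical illustrations, not identifiers from TaxiWorld.

```python
class ObserverModel:
    """One observer's schemas for other agents (hypothetical structure)."""

    def __init__(self):
        self.schemas = {}      # schema id -> set of construal-frame names
        self.schema_of = {}    # observed agent -> schema id

    def learn(self, agent, frame):
        """Record a construal frame that explained the agent's behavior."""
        sid = self.schema_of.setdefault(agent, agent)
        self.schemas.setdefault(sid, set()).add(frame)

    def assume_like(self, new_agent, known_agent):
        """Use known_agent's schema as the default for new_agent (shared, not copied)."""
        self.schema_of[new_agent] = self.schema_of[known_agent]

    def frames_for(self, agent):
        return self.schemas[self.schema_of[agent]]

    def retract(self, agent, frame):
        """Drop a disconfirmed frame for one agent, splitting a shared schema first."""
        sid = self.schema_of[agent]
        shared = [a for a, s in self.schema_of.items() if s == sid and a != agent]
        if shared:
            # Other agents still rely on this schema: give this agent its own copy.
            new_sid = f"{agent}-split"
            self.schemas[new_sid] = set(self.schemas[sid])
            self.schema_of[agent] = new_sid
            sid = new_sid
        self.schemas[sid].discard(frame)


harry = ObserverModel()
harry.learn("Tom", "wants-to-keep-money")
harry.learn("Tom", "fear-of-seedy-joliet-fare")
harry.assume_like("Dick", "Tom")                     # Dick defaults to Tom's schema
harry.retract("Dick", "fear-of-seedy-joliet-fare")   # Dick turned the offer down
```

After the retraction, Tom's schema is unchanged while Dick has his own copy without the fear frame. Sharing until the first contradiction is one design choice among several; copying eagerly would also work but would lose later updates learned about the shared type.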

The rudimentary negotiation, the simple opportunity recognition, and

the rudimentary default personality reasoning, all open research questions

in their own right, are not what is important in these examples. What is of

interest is the idea that there are units of appraisal, here represented as

frames, which can be used as filters for the interpretation of situations,

and that these appraisal units can be mixed and matched as necessary to

construct rudimentary personalities for agents (i.e., to give them primitive

affective life). Of additional interest is the idea that these appraisal

units can also be used by an observing agent to construct an internal

representation of the observed agents for generating explanations of

their actions, and for predicting their emotional responses to future

similar situations. Lastly, it is important to consider that agents

manifest their emotions in ways that can be understood by observers: agents

often communicate their emotions through their actions, or their lack

thereof.

In the following chapters we attempt to illustrate some concerns

that have to be addressed by emotion reasoning systems, how our

representation addresses these concerns, and how it can be used to model a

number of different multi-agent interactions.

2 Overview

In this chapter we provide an overview of the Affective Reasoner.

The discussion focuses on the functional role of the various modules in the

system. Two of these modules (construal and action-generation) are quite

elaborate, having important theoretical content, and so are only introduced

here since they are discussed more fully in later chapters. The other

modules are less elaborate, and are treated fully in this overview.

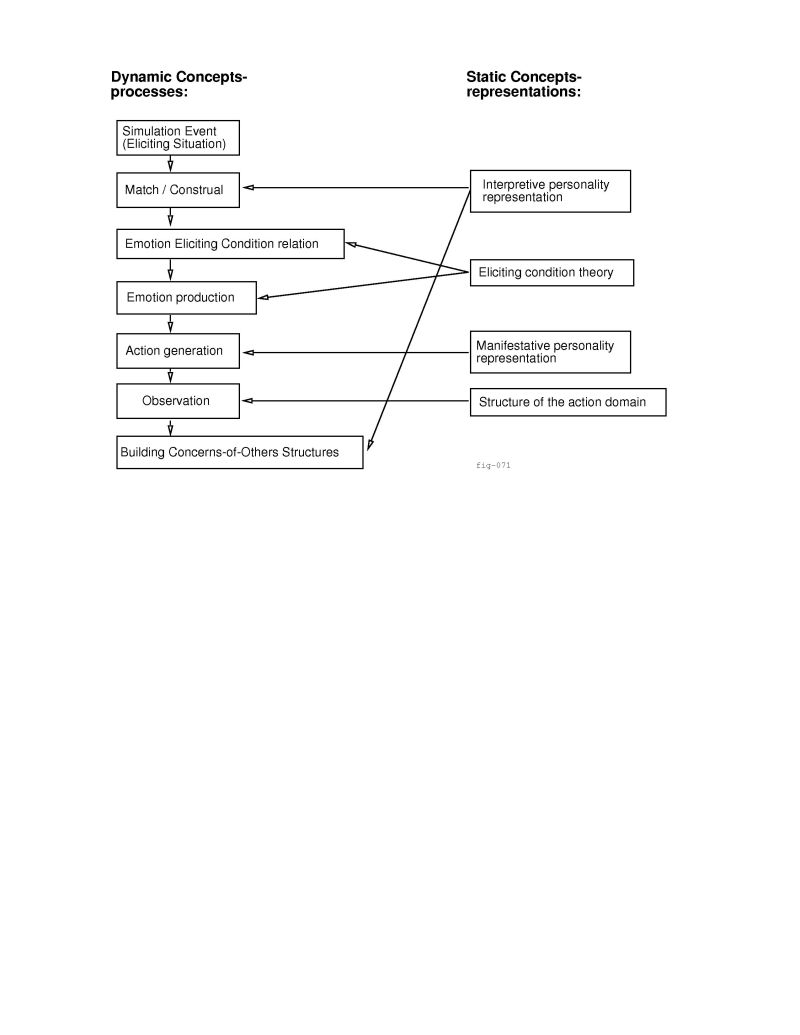

The sections are organized around the various stages the system goes

through in processing a simulated situation. They are introduced in the

order of the different processing stages. These stages, and the static

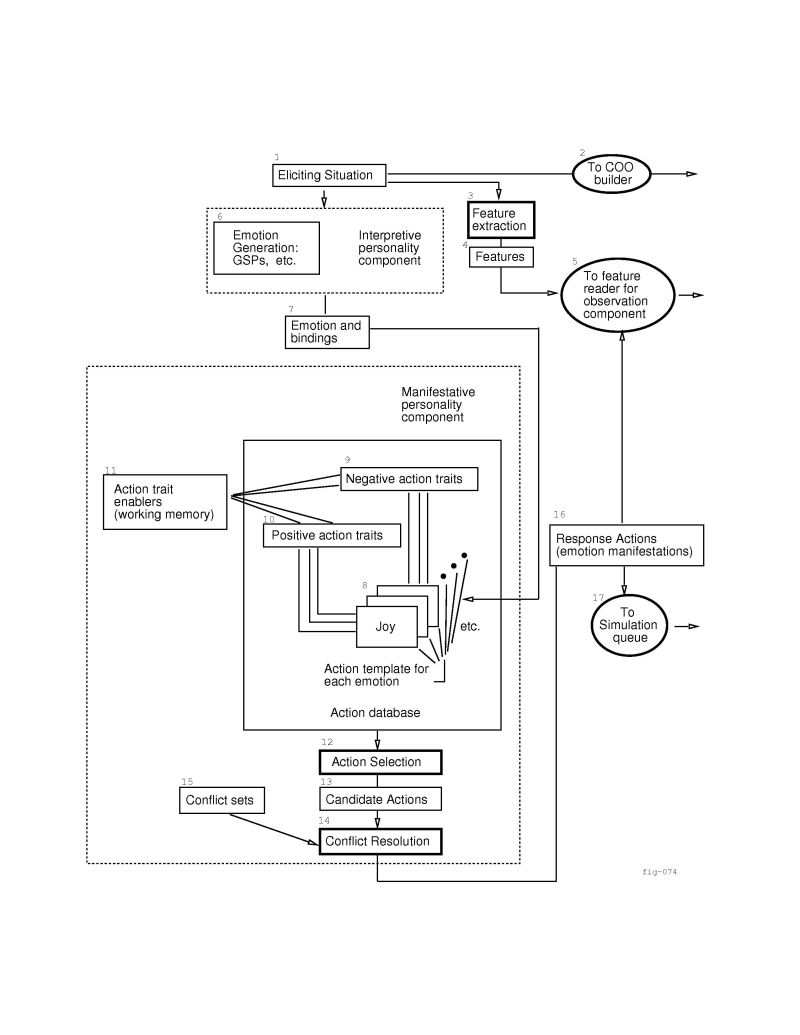

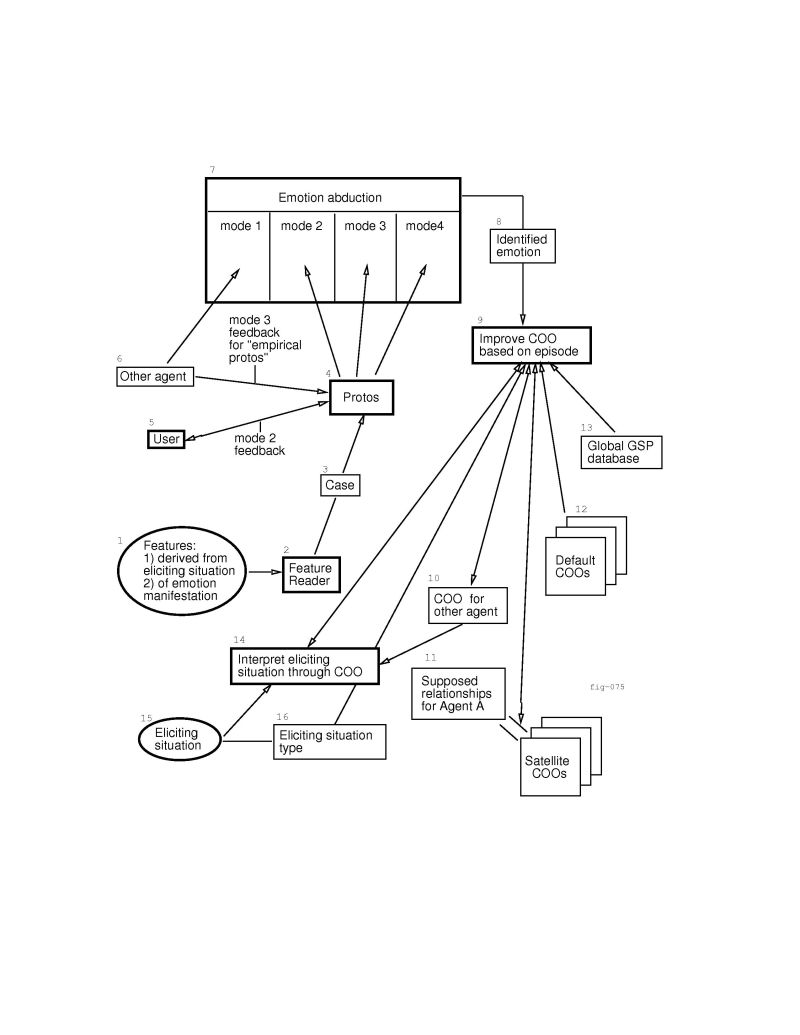

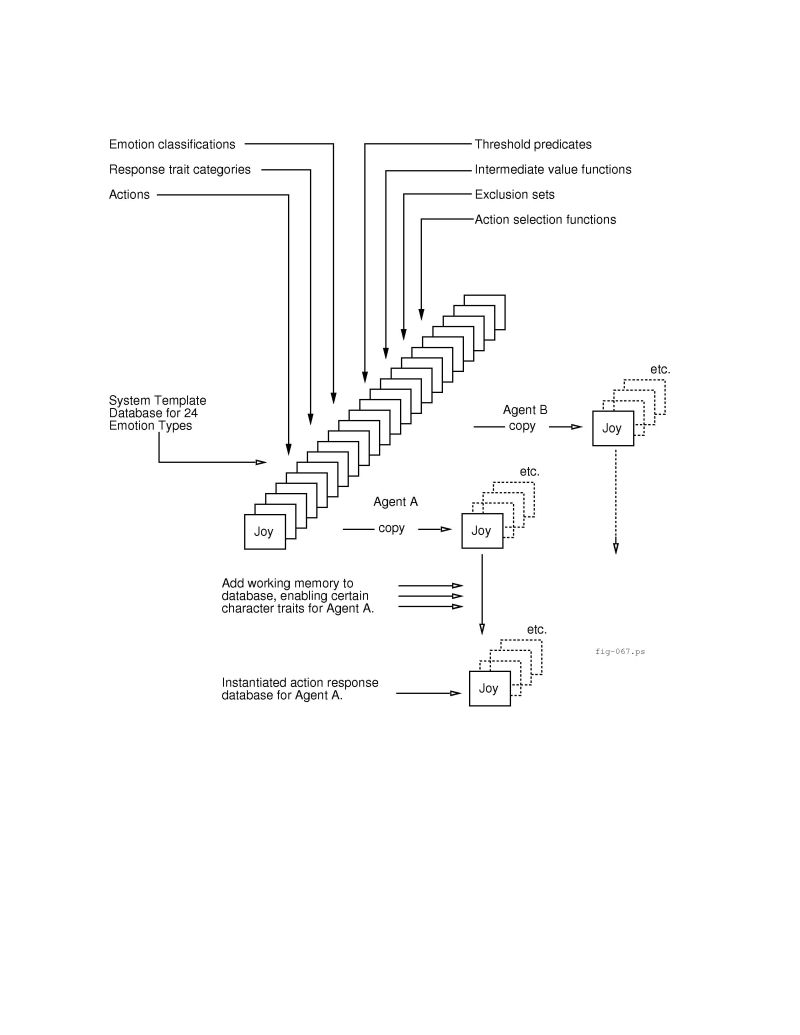

representations which they use, are illustrated in figure 2.1.

Processing flows from some initiating simulation event through emotion and

action generation to the final stages simulating observation by other

agents. Roughly speaking, this may be interpreted as follows: Something

happens in the modeled ``world'',

creating a situation. The agents that populate this world interpret the

situation in terms of their individual concerns. Each interpretation is

reduced to a nine-attribute relation, and some variable bindings. Specific

configurations of the relation lead to the production of specific emotional

states in the agents (i.e., their emotional responses to the situation).

These emotional states, in turn, are manifested as action responses. Agents

can observe each other's responses to situations and attempt to explain

them in terms of emotions the other agent may have been

experiencing. An observing agent then finds an explanation for that emotion

based on varied interpretations of the original situation. Once this is

done, the observer places the schema that led to the successful

interpretation (i.e., the interpretation that led to what was believed to

be the other agent's emotion) into a database representing the concerns of

the other agent. This Concerns-of-Other database may then be used to predict

and explain the other agent's responses to future similar situations.

Figure 2.1: Processing stages and related representations

2.1 Fundamental concepts

This research is at the juncture of Artificial Intelligence and

Cognitive Science. Its interdisciplinary nature can lead to confusion over

the use of terms, such as personality. Accordingly we will, in this

section, make explicit our intended meanings. In addition, we also discuss

some central concepts, such as the basic emotion eliciting condition theory

from

[18] on which the construal process is based.

Rudimentary personalities. To computer scientists, personality means something like ``an aggregate of qualities that

distinguish one as a person'' (Webster). To psychologists, however, personality means something rather different, something like co-occurring classes of trans-situational stable traits.

In the Affective Reasoner, the agents in the system have rudimentary

``personalities'' (at least computer scientists might consider this to be

so) that distinguish them from one another. These rudimentary personalities

are divided into two parts: the disposition agents have for interpreting

situations in their world with respect to their concerns, and the

temperament that leads them to express their emotions in certain ways. We

have chosen the terms interpretive personality component and manifestative personality component to denote these two parts of an agent's

unique makeup. By the former we mean the rudimentary

``personality'' which gives agents individuality with respect to their

interpretations of situations (i.e., their uniquely individual concerns).

By the latter we mean the rudimentary ``personality'' which

gives agents individuality with respect to the way they express or manifest

their emotions.

Situations that lead to emotions. In the Affective Reasoner,

some simulation events create situations that can initiate emotion

processing on the part of the agents involved. These we call emotion

eliciting situations or simply, eliciting situations. An example of

such an eliciting situation is the conceptual ``arrival'' of some

agent at a location, which might give rise to an emotion such as relief or

distress. Eliciting situations are not to be confused with eliciting conditions which derive from the emotion eliciting condition

theory of [18] and are discussed below.

Goals. This term has a very broad meaning. Here we use it in

its simplest sense: a state of affairs that an agent desires to have come

about. In this dissertation, unless otherwise specified, most goals will be

considered to be equivalent in structure. Clearly this is not actually the case. Some goals are preservation goals,

some are never satisfied (e.g., living a good life), some can be partially

achieved by achieving some other goal (e.g., saving another $100 towards a

house), and so forth. In general, however, these distinctions have more to

do with goal generation, interaction and retirement than they do with the

way goals fit into our underlying cognitive theory. Consequently, for most

of the discussion it will suffice to define the term goal to mean

simply, a desired state of affairs that, should it obtain, would be

assessed as somehow beneficial to the agent.

Object domain. Emotion reasoning can be performed as well by

one person as by another. Furthermore, people can have emotions about almost

anything and in almost any circumstance. People have emotions about

something important like the state of their finances, but they might also

have them about something as apparently inconsequential as the state of

their shoelaces. For this reason emotions may be considered an abstract domain that operates within object domains. The language of the

emotion domain is abstract: goals, standards, preferences, and so forth. The

language of an object domain is specific: money, shoelaces, etc. The

Affective Reasoner can be used to do abstract emotion reasoning in any

object domain that can be modeled. One such object domain is the world of

taxi drivers, as represented by the TaxiWorld version of the Affective

Reasoner. By object domain, then, we mean that domain in which

the eliciting situations for emotions are described, and in which

emotion-based actions are manifested.

The emotion eliciting condition theory. The emotion eliciting

condition rules we use for the strong-theory reasoning component of the

Affective Reasoner are based on the work of [18]. Ortony, et al.

specify twenty-two emotion types based on valenced reactions to

situations construed as being either goal-relevant events,

acts of accountable agents, or attractive and unattractive objects. The extended and adapted twenty-four emotion-type

version of the emotion eliciting condition theory that we have used in the

Affective Reasoner is outlined in table 2.1, based on

the work of [16]. Each of these twenty-four emotion states has a

set of eliciting conditions. When the eliciting conditions are met, and

various thresholds have been crossed, corresponding emotions result. A key

element of the theory is that the way emotion eliciting situations map into

these eliciting conditions depends on the interpretations of the

individual agent.

For example, suppose that in a basketball game my team makes the winning

basket at the buzzer, and your team loses. I may experience joy at the

event, whereas you may experience distress. In both cases we share the

same sets of eliciting conditions for our emotions and the emotion eliciting

situation is the same (i.e., the ball went in the basket just before the

buzzer); it is only the interpretation or construal of the situation

which is different.

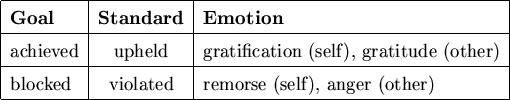

Table 2.1: Emotion categories (types). Clark Elliott, 1998, after Ortony, et al., 1988.

| Group | Specification | Category label and emotion type |
|---|---|---|
| Well-Being | appraisal of a situation as an event | joy: pleased about an event |
| | | distress: displeased about an event |
| Fortunes-of-Others | presumed value of a situation as an event affecting another | happy-for: pleased about an event desirable for another |
| | | gloating: pleased about an event undesirable for another |
| | | resentment: displeased about an event desirable for another |
| | | jealousy*: resentment over a desired mutually exclusive goal |
| | | envy*: resentment over a desired non-exclusive goal |
| | | sorry-for: displeased about an event undesirable for another |
| Prospect-based | appraisal of a situation as a prospective event | hope: pleased about a prospective desirable event |
| | | fear: displeased about a prospective undesirable event |
| Confirmation | appraisal of a situation as confirming or disconfirming an expectation | satisfaction: pleased about a confirmed desirable event |
| | | relief: pleased about a disconfirmed undesirable event |
| | | fears-confirmed: displeased about a confirmed undesirable event |
| | | disappointment: displeased about a disconfirmed desirable event |
| Attribution | appraisal of a situation as an accountable act of some agent | pride: approving of one's own act |
| | | admiration: approving of another's act |
| | | shame: disapproving of one's own act |
| | | reproach: disapproving of another's act |
| Attraction | appraisal of a situation as containing an attractive or unattractive object | liking: finding an object appealing |
| | | disliking: finding an object unappealing |
| Well-being/Attribution | compound emotions | gratitude: admiration+joy |
| | | anger: reproach+distress |
| | | gratification: pride+joy |
| | | remorse: shame+distress |
| Attraction/Attribution | compound emotion extensions | love: admiration+liking |
| | | hate: reproach+disliking |

*Non-symmetric additions necessary for some stories.

The emotion types are simply categorizations of selected patterns of

emotion eliciting conditions. They have been given English names roughly

corresponding to an intensity-neutral label for the type of

emotions represented by the specific configurations of the emotion

eliciting conditions. It is important to note that these names (e.g., joy and anger) given to the emotion types are not to be

mistaken for the specific emotions to which they usually refer. For

example, annoyance is one of the anger type emotions, as is

rage, because they both follow from interpretations of a situation

as an undesirable event coming about as a result of someone else's

blameworthy act.

Emotion eliciting conditions leading to emotions

fall into four major categories: those rooted in the effect of events on the goals of an agent, those rooted in the standards

and principles invoked by an accountable act of some agent, those

rooted in tastes and preferences with respect to objects

(including other agents treated as objects), and lastly, selected combinations

of the other three categories. Another way to view these categories is as

being rooted in an agent's assessment of the desirability of some

event, of the praiseworthiness of some act, of the attractiveness of some object, or of selected combinations of these

assessments.
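As a rough illustration of how these three assessments select emotion types, the sketch below maps a single construal to the Well-Being, Attribution, Attraction, and compound categories of table 2.1. It is written in Python and is not the Affective Reasoner's code: the function names are hypothetical, thresholds and intensities are ignored, the prospect-based and fortunes-of-others groups are omitted, and the choice to report a compound type in place of its two constituents is an assumption made here for brevity.

```python
# Compound emotion types from table 2.1, keyed by (attribution type, well-being type).
COMPOUNDS = {
    ("admiration", "joy"): "gratitude",
    ("reproach", "distress"): "anger",
    ("pride", "joy"): "gratification",
    ("shame", "distress"): "remorse",
}


def well_being(desirable):
    """Event-based type from the desirability assessment."""
    return "joy" if desirable else "distress"


def attribution(praiseworthy, own_act):
    """Act-based type from the praiseworthiness assessment."""
    if own_act:
        return "pride" if praiseworthy else "shame"
    return "admiration" if praiseworthy else "reproach"


def attraction(appealing):
    """Object-based type from the attractiveness assessment."""
    return "liking" if appealing else "disliking"


def emotion_types(desirable=None, praiseworthy=None, own_act=False, appealing=None):
    """Return the emotion types for one construal; None means 'not assessed'."""
    types = []
    wb = well_being(desirable) if desirable is not None else None
    at = attribution(praiseworthy, own_act) if praiseworthy is not None else None
    if (at, wb) in COMPOUNDS:
        # Assumption: the compound type stands in for its two constituents.
        types.append(COMPOUNDS[(at, wb)])
    else:
        types.extend(t for t in (wb, at) if t is not None)
    if appealing is not None:
        types.append(attraction(appealing))
    return types


blamed_loss = emotion_types(desirable=False, praiseworthy=False)
own_success = emotion_types(desirable=True, praiseworthy=True, own_act=True)
grouch = emotion_types(desirable=False, appealing=False)
```

Here `blamed_loss` is an undesirable event attributed to another agent's blameworthy act, which is the anger type discussed above.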

Emotion types. As discussed above, when we speak of an

emotion generated by the system we are really talking about an emotion of

that type. It is usually not convenient (i.e., it obscures the

meaning of the text) to talk about the ``emotion type of anger'' and so

forth. However, it should be understood throughout this text that when

we refer to some emotion, as though by name, we are actually referring to

some unspecified emotion characterized by that named emotion type.

Goals, standards and preferences databases. These

are referred to in the text as GSPs. They are the hierarchical frame

databases used to represent an agent's concern structure.

They are organized around the

three categories of an agent's concerns. They hold most of the information

used to define an agent's interpretive personality component. When

an agent is created, the GSP database must be filled in to give it a

unique set of concerns. When an eliciting situation is interpreted using

this database, attributes of the eliciting condition theory are bound to

features in the situation. See table 2.1, and

chapter 3 for discussion.

Representing concerns of others. An agent may represent the

concerns of some other agent by keeping a partial GSP database for that

other agent, and filling it in as knowledge about the agent is acquired.

Since these databases represent the concerns of other agents, with respect

to an observing agent, they are known as Concerns-of-Others,

or COO, databases.

Since they are generally learned by the agent, COOs are usually incomplete,

and may contain erroneous interpretation schemas as well. See

section 2.8 for a discussion.

Emotion Eliciting Condition Relations. The process that

matches frames in the GSP (and COO) databases against an eliciting situation

will, if the match is successful, reduce the eliciting situation to a set of

bindings. Some of the bindings represent values for two or more of the nine

attributes of a special relation known as the emotion eliciting

condition relation.

Different patterns of bindings for the attributes in this relation, and

different values, give rise to different emotions. In the text these are

abbreviated as EEC relations. See section 2.3 for

discussion.

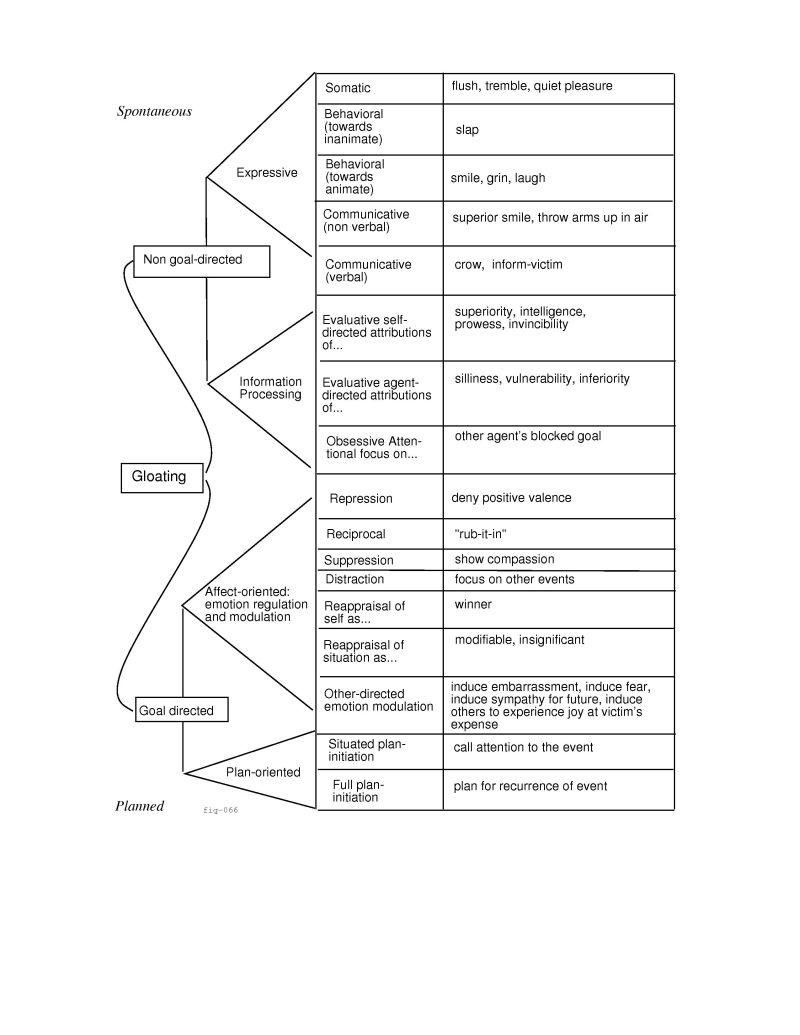

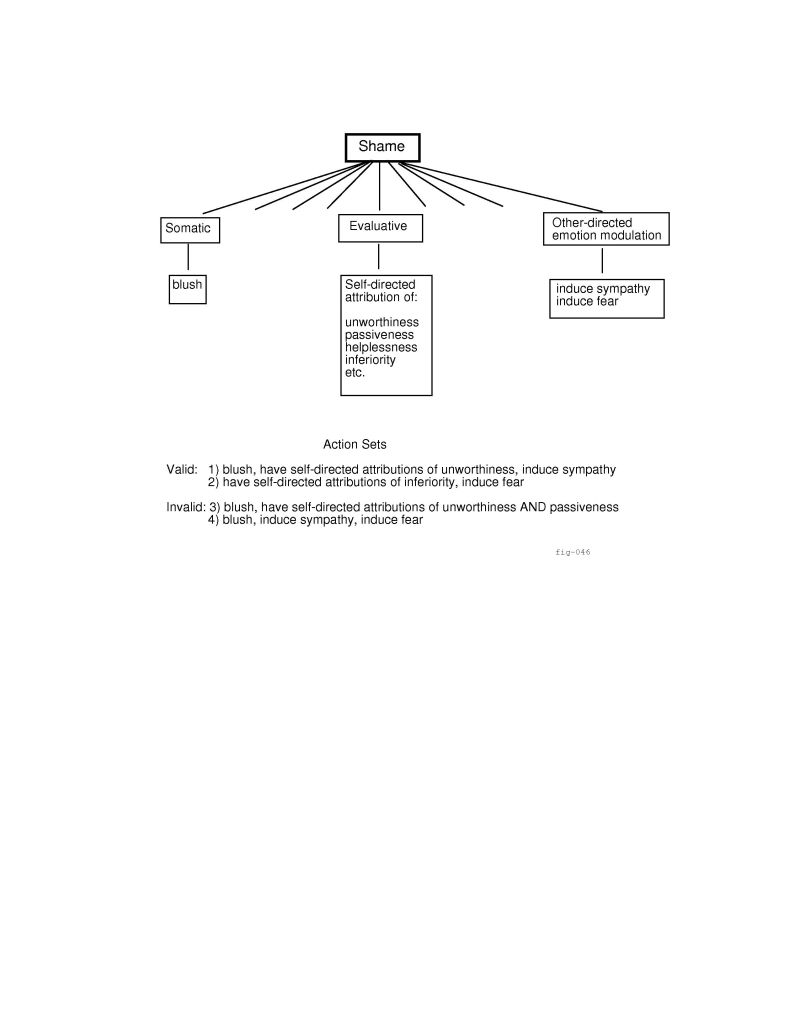

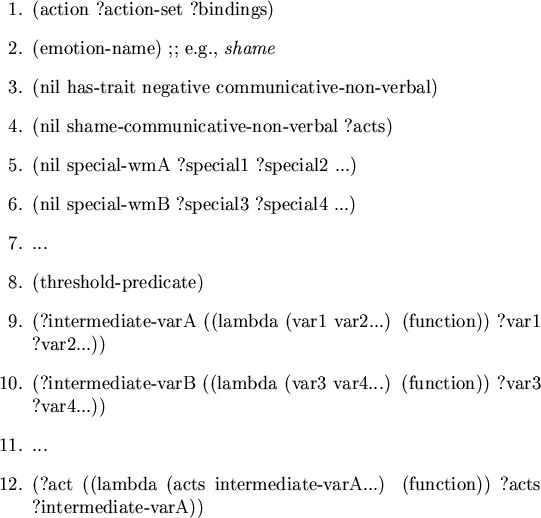

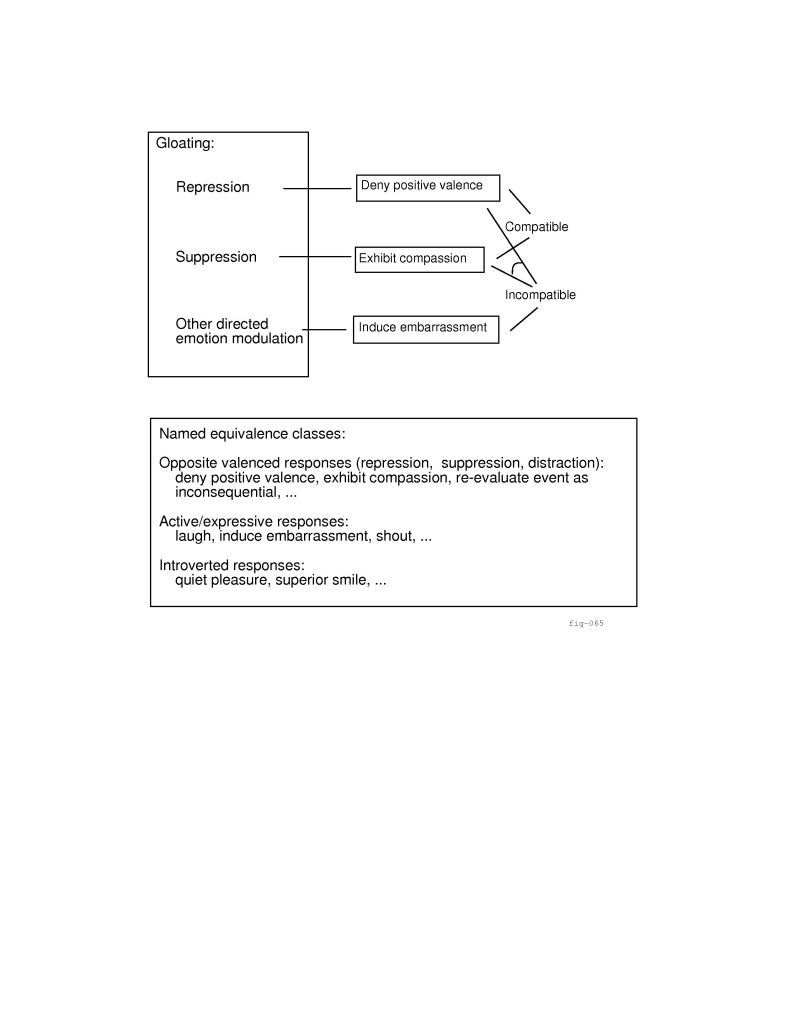

Action response categories. Once an agent is in an emotional

state, it will manifest this emotion in one way or another. Some of these

manifestations naturally fall into one functional category, while others

fall into another. For example, trembling and breaking into a

cold sweat may be categorized by the somatic characteristics they

share. We have specified approximately twenty different groups (there is

some variation between the emotions) to which the various action responses

belong. See figure 4.1 for a complete listing of the action

response categories for gloating, and section 2.6.1 and

chapter 4 for discussion.

Frame types. Construal frames, which interpret emotion

eliciting situations (also represented as frames), must be retrieved from an

agent's GSP database at the time the situations arise. The retrieval of

candidate construal forms is a feature-indexing problem. A satisfactory

solution to this problem is beyond the scope of the current research. We

sidestep it in the Affective Reasoner by simply giving each eliciting

situation a type, such as an arrival-at-destination frame

type, or a passenger-pays-driver frame type. Construal frames of a

particular type are candidates for interpreting eliciting situations of the

same type.
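Typing the situations turns candidate retrieval into a simple keyed lookup. A minimal sketch, in Python, of what such an index might look like follows. The class `GSPDatabase`, its methods, and the construal frame names are hypothetical; only the two frame type names come from the text.

```python
from collections import defaultdict


class GSPDatabase:
    """Construal frames indexed by the eliciting-situation type they can interpret."""

    def __init__(self):
        self._by_type = defaultdict(list)

    def add(self, frame_type, frame_name):
        self._by_type[frame_type].append(frame_name)

    def candidates(self, situation_type):
        """Return the construal frames that share the situation's type."""
        return list(self._by_type.get(situation_type, []))


gsp = GSPDatabase()
gsp.add("arrival-at-destination", "relieved-to-arrive")        # hypothetical frame
gsp.add("passenger-pays-driver", "pleased-by-large-fare")      # hypothetical frame
gsp.add("passenger-pays-driver", "displeased-by-small-tip")    # hypothetical frame

found = gsp.candidates("passenger-pays-driver")
```

Only the frames returned by `candidates` need to be handed to the matcher, so the cost of a lookup does not grow with the number of unrelated frame types.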

The structure of simulation events. The Affective Reasoner is

designed around a simulation engine. Initial simulation events are

placed in the system's event queue. When the simulation is started these

simulation events are processed, and spawn further simulation

events which are then placed in the event queue, ad infinitum. In this

manner, once the simulation is started, it continues until it is halted.

Some of the simulation events simply drive the system, moving icons around

the screen and so forth, and are not of theoretical interest. Other

simulation events have eliciting situation frames attached to them

which, when matched against the concerns of agents, can initiate emotion

processing.
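The event-driven structure described here can be sketched as a loop over a queue in which handling one event may enqueue others. The Python below is illustrative only. It assumes a first-in, first-out queue, which the text does not specify, and it uses a `max_steps` limit to stand in for the user halting the simulation. The event names and the `handle` function are hypothetical.

```python
from collections import deque


def run(initial_events, handle, max_steps):
    """Process events in FIFO order; each handled event may spawn further events."""
    queue = deque(initial_events)
    processed = []
    while queue and len(processed) < max_steps:
        event = queue.popleft()
        processed.append(event)
        queue.extend(handle(event))
    return processed


def handle(event):
    name, n = event
    if name == "move":
        # A driving event reschedules itself; every third step a passenger arrives.
        spawned = [("move", n + 1)]
        if n % 3 == 0:
            spawned.append(("passenger-arrives", n))
        return spawned
    # A situation-bearing event: this is where agents would construe the situation.
    return []


log = run([("move", 1)], handle, max_steps=6)
```

In this toy run the `move` events only drive the simulation, while the `passenger-arrives` event plays the role of an event with an eliciting situation frame attached.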

2.2 The construal process

The construal process is covered in detail in

chapter 3. Here we give a brief introduction to the

emotion eliciting condition theory from [18], and place the

construal process in the context of that theory. After this introduction we

discuss how a matcher is used to identify and instantiate internal schemas

called construal frames which are used to interpret eliciting

situations in the simulated world for the simulated agents.

When a simulation event occurs which has an attached eliciting

situation frame, agents appraise the situation's relevance to their

concerns by trying to match it against internal schemas representing the

eliciting conditions for the various emotions. Has a goal been achieved?

If so, is the goal important enough to generate an emotion? Has a standard

been violated? Who is responsible for the blameworthy act? The answers to

such questions result in an interpretation of the event with respect

to the eliciting conditions for emotions in terms of the observing agent's

concerns. If the interpretation is such that the eliciting conditions have

been met, and certain feature thresholds have been reached (e.g., was enough money lost...), then an emotion will result.

The internal schemas which map emotion eliciting situations into the

eliciting conditions for an emotion for some agent are represented as

frames, known as construal frames. These construal frames are in

an inheritance hierarchy. Leaf node frames inherit slots from ancestors, so

that many attributes need only be specified once, in some ancestor.

The frame system is based on XRL [4] but has been extended so that

slots may contain pattern-matching variables and attached procedures. Since

variables allow us to create generalized frames, many different instances of

a certain situation type may match the same construal frame, albeit with a

different set of resultant bindings for the variables produced by the match.
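To make the role of pattern-matching variables concrete, here is a small Python sketch of matching a generalized construal frame against a situation and collecting bindings. It is a simple one-way matcher, not the specialized unification algorithm used in the Affective Reasoner, and it omits attached procedures. The `?name` variable syntax, the slot names, and the function `match` are assumptions made for illustration.

```python
def match(construal_slots, situation_slots):
    """Match construal-frame slots against a situation; return bindings or None."""
    bindings = {}
    for slot, pattern in construal_slots.items():
        if slot not in situation_slots:
            return None              # the situation lacks a slot the frame requires
        value = situation_slots[slot]
        if isinstance(pattern, str) and pattern.startswith("?"):
            if bindings.setdefault(pattern, value) != value:
                return None          # same variable, conflicting values
        elif pattern != value:
            return None              # constant slot disagrees with the situation
    return bindings


frame = {"passenger-mood": "cheerful", "driver": "?self", "passenger": "?who"}
situation = {
    "passenger-mood": "cheerful",
    "driver": "Harry",
    "passenger": "passenger-17",
    "location": "Loop",
}

result = match(frame, situation)
no_match = match(frame, {**situation, "passenger-mood": "gloomy"})
```

The same `frame` would match any cheerful-passenger situation, each time producing a different set of bindings for `?self` and `?who`.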

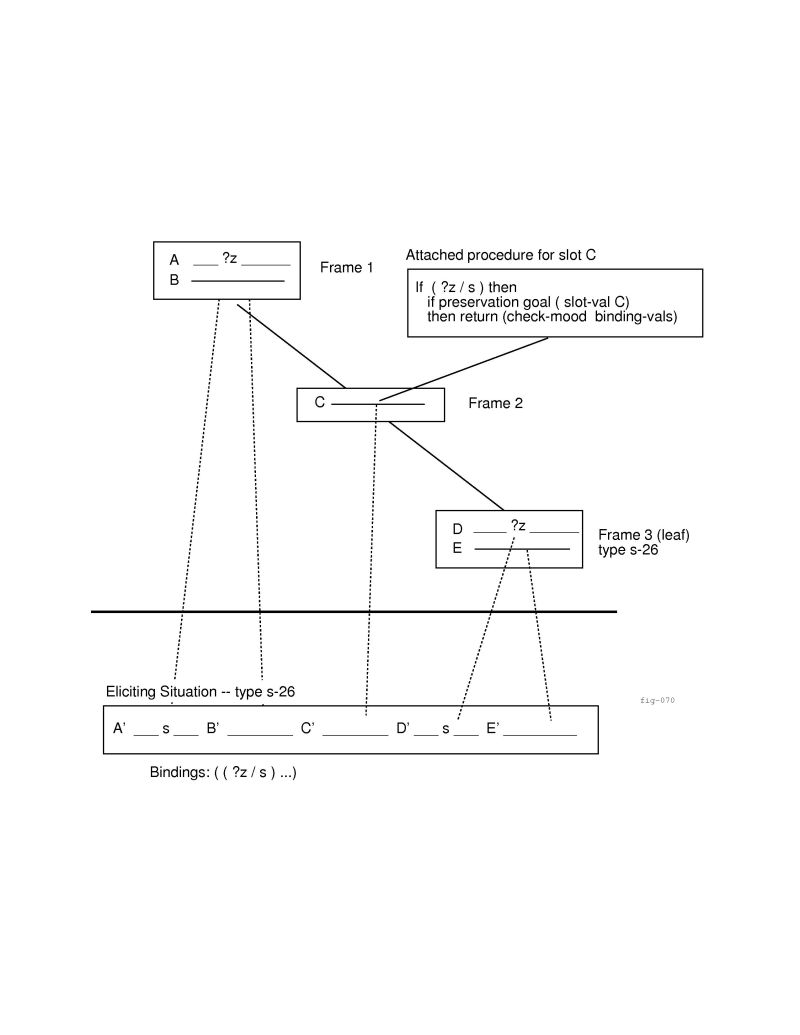

Figure 2.2 shows how inherited slots are used to match

eliciting situation frames. In this figure the five slots come

from three different frames. Frame 3 is the leaf node construal frame,

and should be thought of, conceptually, as containing all five slots. These

five slots match the slots for the eliciting situation, pictured at the

bottom of the illustration. Note that the hierarchical nature of the GSP

database is purely for the convenience of capturing an agent's concerns in

frames. There is no functional reason for the hierarchy (i.e., there is no

run-time processing dependent upon the hierarchy); the hierarchies of frames

could be compiled by collapsing them into ``flat'' representations of the

leaf node frames, so that inherited slots would be propagated down and

included in each of the containing frame's leaf-node descendants.

Figure 2.3: Inheritance of slots in a construal frame
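The collapsing of a frame hierarchy into flat leaf-node frames, as described above, can be pictured with a minimal sketch. The `Frame` class, the `flatten` function, and the placement of slots B, C, and E are hypothetical and chosen only for illustration (the text fixes only slot A in Frame 1 and slot D in Frame 3). The rule that a lower frame overrides an inherited slot of the same name is likewise an assumption.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Frame:
    """A construal frame: a name, an optional parent, and locally defined slots."""
    name: str
    parent: Optional["Frame"] = None
    slots: Dict[str, object] = field(default_factory=dict)


def flatten(leaf: Frame) -> Dict[str, object]:
    """Collapse a leaf frame and its ancestors into one flat slot dictionary.

    Ancestors are applied first, so a slot redefined lower in the
    hierarchy overrides the inherited one (an assumed override rule).
    """
    chain: List[Frame] = []
    node: Optional[Frame] = leaf
    while node is not None:
        chain.append(node)
        node = node.parent
    flat: Dict[str, object] = {}
    for frame in reversed(chain):  # root first, leaf last
        flat.update(frame.slots)
    return flat


# Hypothetical three-frame hierarchy holding five slots in total.
frame1 = Frame("frame-1", slots={"A": "?z", "B": "b-value"})
frame2 = Frame("frame-2", parent=frame1, slots={"C": "c-value"})
frame3 = Frame("frame-3", parent=frame2, slots={"D": "?z", "E": "e-value"})

flat_frame3 = flatten(frame3)
print(sorted(flat_frame3))  # ['A', 'B', 'C', 'D', 'E']
```

Because the flat dictionary carries every inherited slot, a matcher working on `flat_frame3` never needs to consult the hierarchy at run time, which is the point made in the text.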

Once candidate frames have been selected as potential matches for an

eliciting situation (using the situation frame type as discussed above), the

matcher comes into play. This matcher uses a specialized unification

algorithm, and is discussed in chapter 3. If a match

between the emotion eliciting situation frame and a particular construal

frame succeeds then the eliciting situation is considered to be of concern

to the agent, as interpreted by the construal frame. At the same time, a

set of bindings is produced from the unification of the pattern-matching

variables and the features of the situation, and any attached procedures

(see below). The feature values contained in these bindings, and

specifications within the construal frame, are used to determine the exact

nature of the eliciting situation with respect to the agent's concerns. In

addition, these bindings are later used to specify the

possible responses. Output from the match process is either an

indication that the match has failed, or an instantiated construal frame

containing bindings for eliciting condition attributes such as desirability

for the agent, praiseworthiness of the act, attractiveness of the object,

and so on.

In figure 2.3, Frame 2 is shown with a

procedure attached to one of its slots. Such procedures allow working memory

values to be incorporated into the match process. They also allow

fine tuning of the match process by calling predicate functions

which may base decisions on the current set of bindings. Finally,

these attached procedures may also contribute to the current set

of bindings, since the (new) set of bindings is returned from the

procedure calls.

Frame 1, slot A and Frame 3, slot D are shown as

sharing a common variable ?z. In the eliciting situation, this

feature is instantiated as the same constant in slots A' and D'. During

the match, since this constant unifies with itself, this portion of the

unification process succeeds and a binding of the variable ?z to the

constant is created and added to the bindings list.
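The interplay of pattern-matching variables, shared bindings, and attached procedures can be illustrated with a minimal sketch. This is plain one-way pattern matching, not the specialized unification algorithm of chapter 3. The function names, the slot letters used as dictionary keys, the constant `"tom"`, and the `at_least` procedure are all assumptions introduced for the example.

```python
from typing import Callable, Dict, Optional

Bindings = Dict[str, object]
# An attached procedure receives the situation's value for its slot and the
# current bindings, and returns the (possibly extended) bindings or None.
Procedure = Callable[[object, Bindings], Optional[Bindings]]


def is_variable(term: object) -> bool:
    """Pattern-matching variables are written as strings starting with '?'."""
    return isinstance(term, str) and term.startswith("?")


def unify_slot(pattern: object, value: object, bindings: Bindings) -> Optional[Bindings]:
    """Unify one construal-frame slot with the matching situation feature."""
    if callable(pattern):  # attached procedure
        return pattern(value, bindings)
    if is_variable(pattern):
        if pattern in bindings:  # already bound: values must agree
            return bindings if bindings[pattern] == value else None
        return {**bindings, pattern: value}
    return bindings if pattern == value else None  # constant


def match(construal: Dict[str, object], situation: Dict[str, object]) -> Optional[Bindings]:
    """Return the bindings if every construal slot unifies, otherwise None."""
    bindings: Optional[Bindings] = {}
    for slot, pattern in construal.items():
        if slot not in situation:
            return None
        bindings = unify_slot(pattern, situation[slot], bindings)
        if bindings is None:
            return None
    return bindings


def at_least(minimum: float, variable: str) -> Procedure:
    """Hypothetical attached procedure: succeed only above a threshold."""
    def procedure(value: object, bindings: Bindings) -> Optional[Bindings]:
        if not isinstance(value, (int, float)) or value < minimum:
            return None
        return {**bindings, variable: value}
    return procedure


construal = {"A": "?z", "C": at_least(100, "?amount"), "D": "?z"}
result = match(construal, {"A": "tom", "C": 250, "D": "tom"})
print(result)  # {'?z': 'tom', '?amount': 250}
mismatch = match(construal, {"A": "tom", "C": 250, "D": "harry"})
print(mismatch)  # None
```

The second call fails because ?z is already bound to one constant when slot D presents a different one; the first succeeds and returns a single binding for ?z together with the binding contributed by the attached procedure.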

An important point to note is that within this context, no

event has meaning to an agent until after it has been filtered through the

concerns of that agent. Without going into the philosophical

foundations of this argument

(but see [22] for a discussion of this) it should be evident

that people work this way: extraneous cognitive and perceptual information

is filtered out of the input stream, and the relevant situations that do

pass this filter are sent on, along with the interpretation of why

they are relevant. Not all interpretations are relevant to the human

affective machinery (e.g., the information that a room is dark and that the

light needs to be turned on is not likely to cause an emotional response),

but a significant amount of binding to affective inference structures occurs

at the time eliciting situations are assessed. For example, affective

states are intertwined with expectations. To form a match between a stored

expectation and some current situation for the purpose of (dis)confirming

the expectation, one must bind features of the new situation to the expected

facilitation or blocking of the stored goals, to the expected upholding or

violation of the stored standards, and so forth.

2.3 Emotion Eliciting Condition relations

Once an initial interpretation has been made, Emotion Eliciting

Condition (EEC) relations are constructed. These are, essentially, a set

of features derived from eliciting situations and their interpretation

which, taken as a whole, may meet the eliciting conditions for one or more

emotions. Different patterns of features lead to different emotions. The

source of these features varies. Some features, such as the names of agents,

are directly derived from the situation. Other features, such as the desirability of the event, are derived from matching the situation against

the agent's concern structure (i.e., they are contained in the bindings

produced when a construal frame from the agent's GSPs is matched against the

situation frame); still other features, namely pleasingness and status, are dependent upon dynamic information and so must be derived

partially from working memory. The complete set of features comprising the

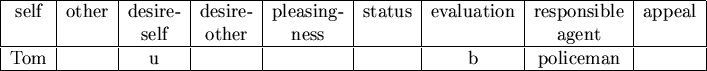

EEC relation is shown in figure 2.4, and is discussed

below:

Figure 2.4: The Emotion Eliciting Condition Relation

| self | other | desire-self | desire-other | pleasingness | status | evaluation | responsible agent | appealingness |
|---|---|---|---|---|---|---|---|---|
| (\*) | (\*) | (d/u) | (d/u) | (p/d) | (u/c/d) | (p/b) | (\*) | (a/u) |

Key to attribute values

| abbreviation | meaning |
|---|---|
| \* | some agent's name |
| d/u | desirable or undesirable (event) |
| p/d | pleased or displeased about another's fortunes (event) |
| p/b | praiseworthy or blameworthy (act) |
| a/u | appealing or unappealing (object) |
| u/c/d | unconfirmed, confirmed, or disconfirmed |
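One way to picture the relation in figure 2.4 is as a record whose attributes are either bound to a permitted value or left unbound. The sketch below is an assumption-laden illustration, not the representation used by the Affective Reasoner: the class name `EECRelation`, the field names, and the use of `None` for "not bound" are all hypothetical. The permitted values follow the key to figure 2.4.

```python
from dataclasses import dataclass, fields
from typing import Optional

# Permitted values per attribute, following the key in figure 2.4.
# Agent-name attributes (marked * in the figure) accept any string.
ALLOWED = {
    "desire_self": {"desirable", "undesirable"},
    "desire_other": {"desirable", "undesirable"},
    "pleasingness": {"pleased", "displeased"},
    "status": {"unconfirmed", "confirmed", "disconfirmed"},
    "evaluation": {"praiseworthy", "blameworthy"},
    "appealingness": {"appealing", "unappealing"},
}


@dataclass(frozen=True)
class EECRelation:
    """One emotion eliciting condition relation; None means 'not bound'."""
    self_agent: str  # always bound to an agent's name
    other: Optional[str] = None
    desire_self: Optional[str] = None
    desire_other: Optional[str] = None
    pleasingness: Optional[str] = None
    status: Optional[str] = None
    evaluation: Optional[str] = None
    responsible_agent: Optional[str] = None
    appealingness: Optional[str] = None

    def __post_init__(self) -> None:
        # Reject values outside the key to figure 2.4.
        for name, allowed in ALLOWED.items():
            value = getattr(self, name)
            if value is not None and value not in allowed:
                raise ValueError(f"{name}={value!r} is not one of {sorted(allowed)}")
        # 'other', when bound, never names the same agent as 'self'.
        if self.other is not None and self.other == self.self_agent:
            raise ValueError("other must differ from self")

    def bound(self) -> dict:
        """Return only the attributes that are bound."""
        return {
            f.name: getattr(self, f.name)
            for f in fields(self)
            if getattr(self, f.name) is not None
        }


# Hypothetical agent name; a blocked goal yields an undesirable event.
relation = EECRelation(self_agent="tom", desire_self="undesirable")
print(relation.bound())  # {'self_agent': 'tom', 'desire_self': 'undesirable'}
```

Leaving most fields unbound is the normal case in this sketch: different patterns of bound attributes are what distinguish one emotion's eliciting conditions from another's.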

- Self. This attribute represents the identity of the

agent experiencing the emotion. The value for this attribute is derived from

the situation. It is always bound to an agent's name. If two agents are

involved in some situation, and it is relevant to the concerns of each of

them, then two sets of emotion eliciting condition relations will be

produced, with self bound to a different agent in each of the

different sets. Three agents will yield three sets, and so on.

- Other. This attribute represents the identity of some

other agent about whose fortunes the self agent may have an emotion.

Such an emotion will result only when pleasingness (for self;

see below) is also bound, on the basis of a relationship stored in

working memory. The value for this attribute is derived from the situation.

When the attribute is bound, it is always bound to an agent's name, but it

is never the same value as self.

- Desire-self.

This attribute represents the self agent's assessment of the

eliciting situation as an event. If he interprets the situation as one

where a goal of his is blocked then the value of this attribute is undesirable; if he interprets it as one where a goal is achieved

the value is desirable. If he does not interpret the eliciting

situation as relevant to his goals then this attribute is not bound. The

value for this attribute is derived from the agent's construal of the

eliciting situation.

- Desire-other.

This attribute represents the self agent's assessment of the eliciting

situation as an event relevant to the desires of another agent. If a self agent has a Concerns-of-Other representation for some other agent

(either specific or default; see section 2.8), he can interpret

that agent's reaction to a situation by ``imagining'' what it is like for

the other agent. If it is perceived that a goal of the other agent is

blocked, the value of this attribute is undesirable; if it is

perceived as having been achieved, the value is desirable; otherwise

the attribute is not bound. The value for this attribute is derived from the

agent's construal of the eliciting situation.

- Pleasingness.

This attribute represents the valence of the self agent's

response to the emotions of another agent.

It is used strictly in situations in which two conditions hold: (1) an

eliciting situation perceived as a goal-relevant event ``happens'' to

another agent (bound to the attribute other), and (2) the agent

bound to the attribute self is related to the agent bound to other either by friendship or animosity. Once the value

of other has been determined from the eliciting situation, the value

of desire-other has been determined from a Concerns-of-Other

representation (section 2.8), and the relationship (friendship or

animosity) has been determined from working memory, the value of pleasingness can be determined. For example, if an agent has a friend, and the

agent observes some situation in which he perceives his friend to have

achieved one of his (the friend's) goals, the agent can be pleased about it.

This attribute is derived from the desire-other attribute in

conjunction with working memory.

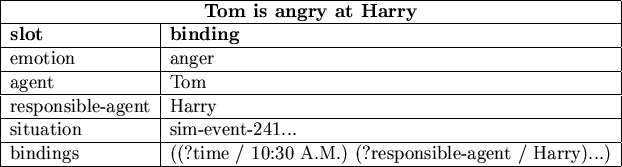

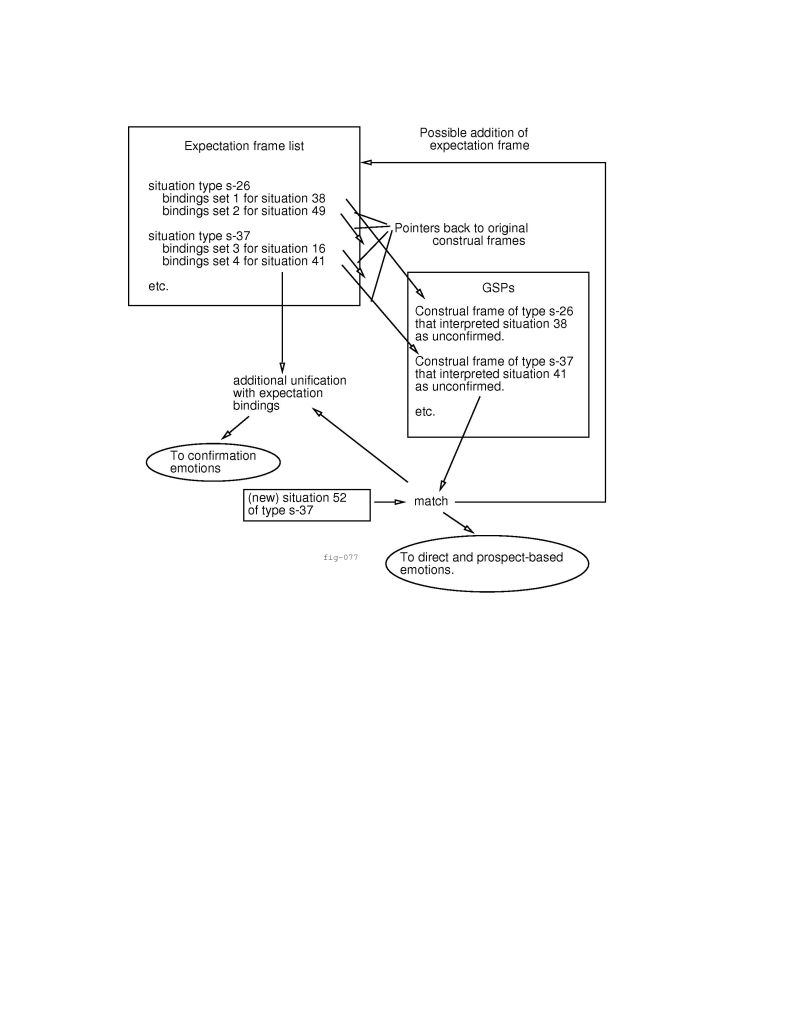

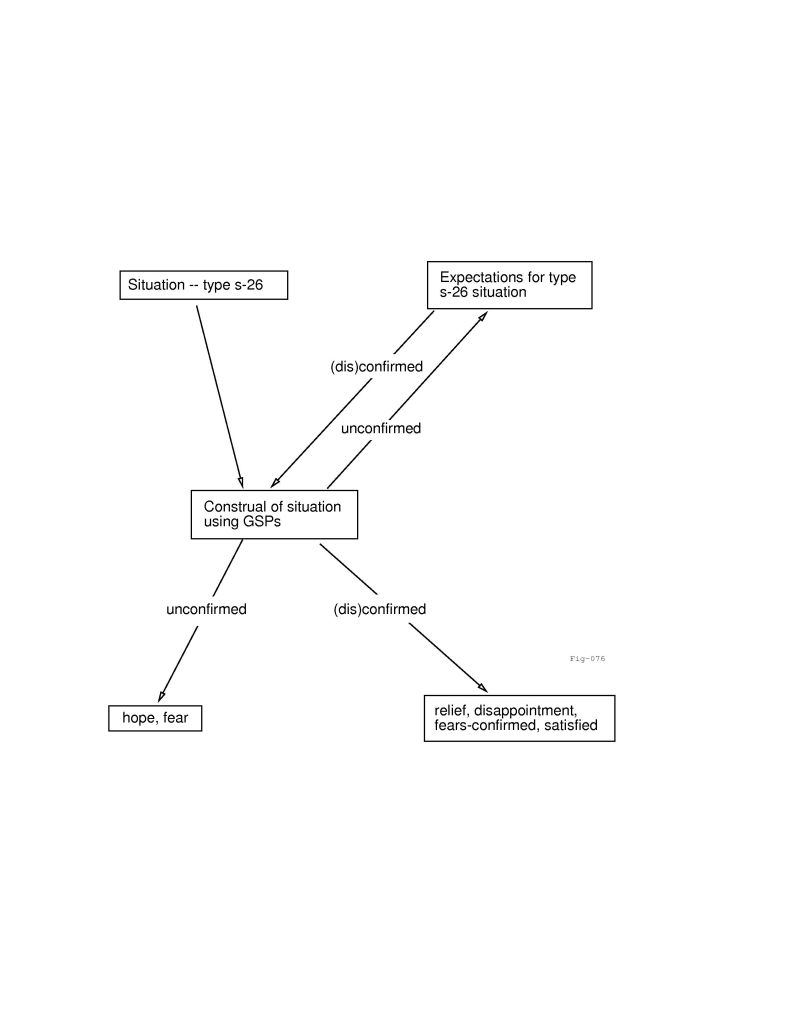

- Status.

When bound, this attribute represents the status of an expectation. When

a situation perceived as an event takes place, in most cases it has no status attribute associated with it (i.e., the status attribute is not

bound). The question of expectations does not arise: an event just happens

and that is the end of it. On occasion, however, events may lead to

expectations on the part of agents. They may also confirm or

disconfirm prior expectations. The representation of such expectations is

problematic and is treated in depth in section 3.3.

Briefly, a situation perceived as an event may be unconfirmed, meaning

that the self agent perceives it as possible, but as not yet

actually having taken place. Once an agent has had this perception, a schema

representing the expected outcome is stored in the

agent's expectation database. At this point, if some situation matching this